Victoria's Alcohol and Other Drug Treatment Data

Snapshot

Is the Department of Health’s (DH) Victorian Alcohol and Drug Collection (VADC) dataset high quality and achieving its intended benefits?

Why this audit is important

In 2020–21, over 40,000 Victorians accessed alcohol and other drug (AOD) services through DH-funded providers. DH needs accurate data to make sure these services are effectively helping Victorians.

Who and what we examined

We looked at the quality of VADC data and how DH uses it to:

- plan AOD treatment services

- manage AOD service providers’ performance.

We audited DH, the Department of Families, Fairness and Housing, and 4 AOD service providers.

What we concluded

Data in the VADC does not accurately represent what service providers are doing for their clients.

DH is responsible for the quality of VADC data and is working to improve it.

However, there is a risk that DH's improvement program will not address all the root causes of the data quality issues, including service providers' limited data capabilities and capacities, and variation in the information technology (IT) systems they use to collect data.

Poor-quality data limits DH’s ability to use it to plan services and manage providers' performance. It also means that service providers need to incur significant costs to address data quality issues.

What we recommended

We made 4 recommendations to DH about improving VADC data quality.

Key facts

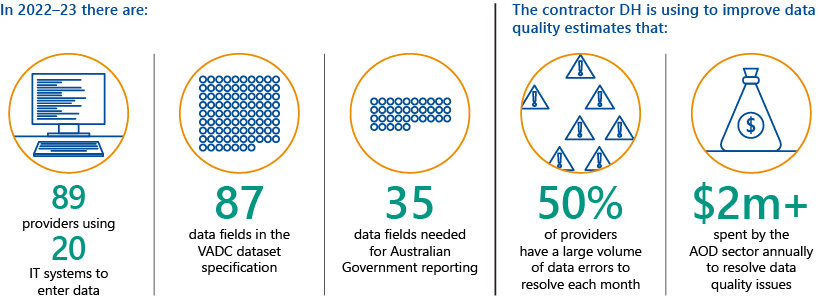

Source: VAGO, based on information from DH and the Australian Institute of Health and Welfare.

What we found and recommend

We consulted with the audited agencies and service providers and considered their views when reaching our conclusions. Their full responses are in Appendix A.

VADC data is not high quality or achieving its intended benefits

A dataset specification is a set of data elements, concepts and validation rules for a data collection.

For example, to register a new member, an organisation might need their full name, date of birth, physical address and email address. It might also require this data in certain formats, such as DD/MM/YYYY.
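As a rough illustration of the membership example above, a specification can be thought of as a list of required data elements plus format rules. The sketch below is hypothetical: the element names and the `validate_member` function are assumptions made for illustration and are not taken from the VADC dataset specification.

```python
import re
from datetime import datetime

# Hypothetical data elements for the membership example (not VADC elements).
REQUIRED_ELEMENTS = ["full_name", "date_of_birth", "physical_address", "email_address"]


def validate_member(record: dict) -> list[str]:
    """Return a list of problems found in one record; an empty list means it passes."""
    problems = []

    # Rule 1: every required data element must be present and non-empty.
    for element in REQUIRED_ELEMENTS:
        if not record.get(element):
            problems.append(f"missing: {element}")

    # Rule 2: the date of birth must be a real date written as DD/MM/YYYY.
    dob = record.get("date_of_birth")
    if dob:
        well_formed = re.fullmatch(r"\d{2}/\d{2}/\d{4}", dob) is not None
        try:
            datetime.strptime(dob, "%d/%m/%Y")
            real_date = True
        except ValueError:
            real_date = False
        if not (well_formed and real_date):
            problems.append("date_of_birth is not in DD/MM/YYYY format")

    return problems
```

A record that supplies all four elements with a date such as `05/03/1990` returns an empty list, while a date written as `1990-03-05` is reported as a format problem.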

A CMS is a software platform that an organisation uses to enter and manage data about its clients. AOD service providers use CMSs to store information about their clients and the treatment they receive.

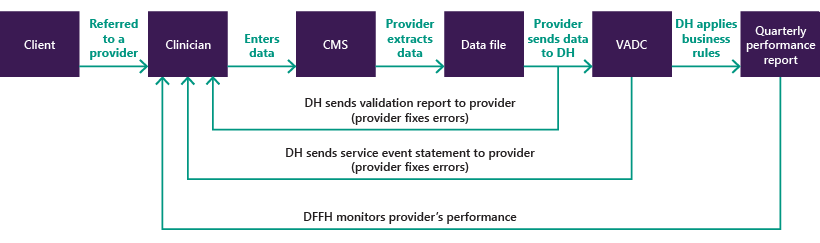

Service providers collect data for the VADC in their CMS. They then extract the data each month and upload it to a DH portal.

An IT vendor is a company that provides software to an organisation. In the context of this audit, IT vendors provide the CMS software that service providers use to collect VADC data.

A data collection model is the process for gathering data for a data collection.

Victorian Alcohol and Drug Collection (VADC) data is not high quality. This limits how the Department of Health (DH) can use it to plan alcohol and other drug (AOD) services and manage service providers’ performance.

While DH's work to improve the VADC is positive, it might not address the root causes of the data quality issues, including:

- the dataset specification’s complexity

- service providers' different data capabilities

- variation in service providers' client management systems (CMSs), which are the information technology (IT) systems they use to enter data.

DH’s planning has had ongoing impacts on data quality

We assessed DH’s planning for the VADC against the Australian Digital Health Agency’s (ADHA) 2020 A Health Interoperability Standards Development, Maintenance and Management Model for Australia (Standards Development Model).

The Standards Development Model describes:

- 8 characteristics of high-quality datasets

- 12 better-practice principles for developing health data specifications

- the impact of using poor practices.

We found that DH fully met only 5 of the 12 better-practice principles when it planned the VADC. In particular, DH did not:

- sufficiently consult with service providers and their IT vendors

- effectively manage the risk that service providers' different data capabilities and CMSs could impact data quality.

DH did not sufficiently consult with service providers and their vendors

DH chose to develop a standalone specification as the data collection model for the alcohol and other drug sector.

DH based this choice on its strategic priorities, which we explain in Section 2.1.

A standalone specification means that DH tells service providers what data they need to collect and how they need to submit it. It is up to service providers to follow these requirements using their own CMSs.

When it was planning the specification in September 2016, DH ran a survey that found 70 per cent of service providers would need to overcome resource, capacity or budget constraints to implement it. However, it is not clear if this feedback affected DH’s planning.

DH also did not consult with service providers' IT vendors on the specification. This is an issue because vendors would need to adapt their CMSs so that service providers collect the right data.

DH did not run an information session with vendors until June 2018, which was just 4 months before it planned to implement the VADC. This meant that vendors had to address issues with the specification during the VADC's implementation.

DH did not effectively manage the risk that service providers' data capabilities and CMSs could impact data quality

When it was planning the VADC, DH identified the risk that service providers might submit poor-quality data due to their:

- different data literacy levels and capabilities

- inadequate CMSs.

DH did not always recognise the severity of these risks.

For example, in 2015 DH anticipated it was highly likely that service providers would submit data that would not meet its requirements. However, it only gave a medium risk rating to the IT capability and capacity issues it identified that directly contributed to this risk.

DH's mitigation strategies for these risks focused on communicating with service providers and using data validation controls to check submitted data. But these were not enough to stop these issues from occurring and becoming the root causes of the VADC's data quality issues.

The VADC data specification and data collection model are too complex for service providers

An internal review DH did in December 2020 found that many service providers have immature IT and data capabilities.

Service providers and vendors we spoke to confirmed that this is an ongoing issue.

In particular:

- service providers' CMSs vary in how well they help them collect high-quality data

- the dataset specification is too complex for them to use because it:

  - has a large number of data elements to report on and possible errors

  - is written in technical language.

As a result:

- VADC data does not always reflect service providers’ activities

- service providers need to incur substantial costs to meet the VADC’s requirements.

CMSs vary in how well they help service providers submit high-quality data

Currently, service providers submit VADC data using at least 20 different CMSs. These CMSs differ in their ability to prevent, identify and correct data quality issues.

For example, a CMS might lack data validation controls or not be able to run internal reports to help the provider check if its VADC submissions accurately reflect the services it provided.

Some service providers also rely on their IT vendor to help them understand what they need to do to meet the VADC's requirements. However, providers do not always get the support they need.

The dataset specification has a large number of data elements to report on and possible errors

The dataset specification has 87 data elements. The Australian Institute of Health and Welfare requires DH to collect 35 data elements for national reporting.

The dataset specification also has 139 possible errors or warnings that service providers might need to fix in their monthly data submissions. For example:

- missing data

- incorrect data combinations

- data that is outside a specified range.
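The three kinds of errors listed above can be sketched as simple checks on a single record. This is a minimal sketch only: the field names (`service_type`, `start_date`, `end_date`, `client_age`) and the rules themselves are invented for illustration and are not drawn from the 139 errors and warnings in the dataset specification.

```python
def check_record(record: dict) -> list[str]:
    """Return the issues found in one hypothetical service record."""
    issues = []

    # 1. Missing data: a required field is absent or blank.
    if record.get("service_type") in (None, ""):
        issues.append("missing data: service_type")

    # 2. Incorrect data combination: an end date recorded without a start date.
    if record.get("end_date") and not record.get("start_date"):
        issues.append("incorrect combination: end_date without start_date")

    # 3. Data outside a specified range: an implausible client age.
    age = record.get("client_age")
    if age is not None and not 0 <= age <= 120:
        issues.append("out of range: client_age")

    return issues
```

A provider's CMS or DH's portal could run checks of this kind before a monthly submission is accepted, which is the general idea behind the data validation controls discussed in this report.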

In September 2021, the contractor DH appointed to improve the quality of VADC data estimated that 50 per cent of service providers have a large number of errors they need to fix each month.

This suggests that many providers do not have the capability or capacity to meet the dataset specification's requirements.

The data specification is written in technical language

Service event statements inform service providers about the data they have submitted and if their activities count towards their performance targets.

The dataset specification is written in technical language that is difficult for the clinicians who enter the data to understand.

DH has published a plain language version of the funding rules and an information sheet for service event statements. It has also added code descriptions to service event statements. These are improvements but are still unlikely to give enough information for users with low data literacy.

This means that some providers rely on their vendor to help them understand what data they need to collect.

VADC data does not always reflect service providers’ activities

We found that all 4 audited service providers either:

- had internal records of activities that did not match their performance reports from DH, or

- could not produce reports to allow us to check if their performance reports are correct.

Service providers also told us of instances where they knew the data they submitted did not fully reflect the services they provided.

Service providers need to incur substantial costs to meet the VADC’s requirements

DH does not require service providers to have staff with specialised data skills. However, due to the complexity of the dataset specification and data collection model, some service providers hire staff with these skills to help them collect and submit high-quality data.

However, service providers vary in size and some do not have the resources to do this. Even larger providers who do have these skills still find it difficult to check if their VADC data reflects the services they delivered.

The 4 audited providers estimate that they have collectively spent at least $2 million on VADC compliance since DH introduced it.

This is well over the $280,000 in grants they received from DH for this purpose. Providers could also use some of these grants for IT equipment to support telehealth and remote service delivery.

In September 2021, DH's contractor estimated that the AOD sector spends at least $2 million a year to fix data quality issues, including $1.8 million by service providers.

This means that service providers have less funding available to deliver their services.

Data quality issues limit how DH can use VADC data to plan services and manage performance

DH uses VADC data to monitor AOD services. The Department of Families, Fairness and Housing (DFFH) also uses it to monitor service providers’ performance on DH's behalf.

However, data quality issues limit its reliability for these purposes.

DH does limited monitoring of statewide AOD services using statistics based on VADC data. It does not use VADC data for demand modelling.

DH also planned to use VADC data to:

- help it develop performance indicators for service providers based on client outcomes

- continue developing AOD funding models.

It has not used VADC data for these purposes.

DH and service providers' data quality controls are not enough to ensure high-quality data

DH’s controls

DH is responsible for the VADC's data quality. DH uses its own data validation controls to ensure that service providers' submitted data meets its requirements.

DH checks if service providers submit data every month and follows up with providers who are late.

DH also does occasional manual checks to analyse data quality. For example, it checks if service providers have submitted unusual data.

DH has an annual process to change the VADC specification. It seeks feedback from service providers and vendors during this process. DH also suggests changes on behalf of the AOD sector. These changes are likely to improve data quality over time.

A data quality statement assesses a dataset's quality against certain criteria. It also assesses how suitable it is for its intended use and other potential uses.

A data quality management plan outlines known quality issues with a dataset and how the data owner plans to address them. It also lets the data owner track results over time.

In May 2022, DH published the VADC's first data quality statement as part of the 2022–23 specification. However, it has not published a data quality management plan, which the Department of Premier and Cabinet’s (DPC) 2018 Data Quality Guideline: Information Management Framework (Data Quality Guideline) requires.

Service providers’ controls

DH recommends that service providers have certain data quality controls, such as reviewing data errors regularly and using the resources that DH provides to support data quality.

We found that audited service providers usually have these controls.

However, their data still does not reflect the services they deliver. This suggests that these controls are not enough to make sure providers' data is high quality.

DH is working to improve data quality but this may not be enough for all service providers

Following its 2020 internal review, DH started an improvement program to help service providers meet the VADC’s requirements.

This program includes training for service providers and improving how DH communicates with the sector.

We assessed the VADC and DH's proposed improvements against ADHA's 8 characteristics for high-quality datasets. We found that DH's improvements are likely to:

- fully address one characteristic

- partially address 6 characteristics

- not address one characteristic.

This suggests that the program will likely improve VADC data to an extent. However, there is a risk that it will not help all service providers submit complete and accurate data.

This is because the specification will still be too complex for all service providers to use.

Service providers will also still need to choose their own CMS regardless of how well it helps them submit high-quality data.

The Royal Commission into Victoria's Mental Health System

DH received funding in 2022–23 for a new data collection system for the mental health sector in response to the Royal Commission into Victoria's Mental Health System. This includes a mental health and wellbeing record and a mental health information and data exchange, which will be able to send DH data in real time.

DH told us that it plans to include the AOD sector in this project in 2026.

Recommendations about improving VADC data quality

| We recommend that: | | Response |
|---|---|---|
| Department of Health | 1. revises the Victorian Alcohol and Drug Collection specification and user guides in consultation with service providers, clinicians and vendors to minimise the burden of collecting data by: (see sections 2.3, 3.2 and 3.3) | Accepted |
| | 2. establishes a minimum set of functional requirements for a Victorian-Alcohol-and-Drug-Collection-compliant client management system and explores options to work directly with vendors to ensure they comply with these requirements (see sections 2.3, 3.2 and 3.3) | Accepted |
| | 3. advises the Minister for Health on options and recommendations to amend the Victorian Alcohol and Drug Collection's data collection model so all service providers are supported to effectively and efficiently meet the specification's requirements. In developing options, it should consider: | Accepted |
| | 4. manages the Victorian Alcohol and Drug Collection in line with the Department of Premier and Cabinet’s Data Quality Guideline: Information Management Framework, including maintaining an up-to-date data quality management plan and data quality statement (see Section 3.2). | Accepted |

1. Audit context

In 2022–23, DH will pay $272.5 million to service providers to deliver AOD assessment, treatment and support services in Victoria. These services include counselling, withdrawal services and rehabilitation.

In 2016, DH developed the VADC to better align its data collection requirements with providers’ services and funding arrangements. DH started using the VADC for data reporting in July 2018.

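This chapter provides essential background information about:

- government-funded AOD services in Victoria

- data collection for AOD services

- the timeline of events relevant to the VADC.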

1.1 Government-funded AOD services in Victoria

The Victorian Government funds 89 service providers to deliver treatment services to people experiencing AOD-related harm and their families and carers.

Service providers include non-government organisations, hospitals, community health services and primary health networks.

Providers can be standalone or work in a group with other providers to deliver services, which is known as a consortium arrangement.

Figure 1A lists the services that the Victorian Government funds these providers to deliver to clients.

FIGURE 1A: AOD treatment services

| Service type | Service activity |
|---|---|
| Presentation | Intake |
| | Residential pre-admission engagement |
| Assessment | Comprehensive assessment |
| Treatment | Residential withdrawal |
| | Non-residential withdrawal |
| | Counselling |
| | Ante and post-natal support |
| | Residential rehabilitation |
| | Therapeutic day rehabilitation |
| | Care and recovery coordination |
| | Outreach |
| | Client education program |
| | Outdoor therapy (youth) |
| | Day program (youth) |
| | Supported accommodation |
| Support | Brief intervention |
| | Bridging support |
| Review | Follow-up |

Source: VAGO, based on DH’s Victorian Alcohol and Drug Collection (VADC) Data Specification 2022–23.

Accessing AOD services

Intake services are the main way that new clients enter the Victorian AOD treatment system. Intake services also help new and existing clients move through the system by prioritising treatment based on clinical judgement.

DirectLine provides a 24-hour telephone counselling, information and referral service for anyone in Victoria who wants to discuss an alcohol or drug-related issue.

AOD service providers work in 16 catchment areas. A group of providers lead each catchment area and manage the intake services for clients in that region.

By centralising the intake system, catchment planners can understand demand and waitlists in their area.

Voluntary clients can access treatment services through the DirectLine telephone line or website. A client can also directly contact the local intake provider for their catchment area.

The Australian Community Support Organisation’s Community Offenders Advice and Treatment Services program assesses clients who access AOD services due to contact with the criminal justice system. The program can also refer clients to other service providers for treatment.

Agreements with service providers

DH manages the state’s AOD system, including policies, funding, service planning and its overall performance.

Each service provider has a service agreement with DH that sets out:

- the type and volume of services DH requires it to deliver

- DH’s data collection requirements.

Through an agreement with DH, DFFH monitors service providers’ performance against their targets.

Funding for service providers

The AOD sector uses 2 funding models:

- the drug treatment activity unit (DTAU)

- the episode of care funding unit.

DTAU funding

The Victorian Government introduced DTAU in 2014 as a new funding model for AOD service providers.

Residential services provide a safe environment for drug or alcohol withdrawal or rehabilitation in a community-based setting.

The government uses DTAU to fund residential services and most adult non-residential services.

DTAU has a set price that the government indexes annually. The amount of funding service providers get is based on their agreement with DH and paid in advance.

For example, DH might fund a service provider to deliver 1,500 DTAU of adult counselling services in the next year. As the service provider delivers these services, it accrues DTAU against its targets.

The amount of DTAU they receive against their targets changes depending on the type of service they deliver and the type of clients they treat.

Episodes of care funding

Government-funded youth and Aboriginal and Torres Strait Islander non-residential AOD services still use the former episodes of care funding unit.

DH counts these units against service providers' performance targets when a client achieves at least one significant treatment goal, such as completing a counselling program.

DH expects providers to achieve 95 per cent of their total DTAU or episodes of care targets. If a provider consistently misses these targets, DH can adjust the provider’s funding and targets.
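To make the 95 per cent expectation concrete, the sketch below applies it to the 1,500 DTAU example above. It is illustrative only: the function names are hypothetical, and it treats units as a simple running total, ignoring the weighting by service type and client type that affects how many DTAU a provider actually accrues.

```python
EXPECTED_PERCENT = 95  # DH expects providers to achieve 95 per cent of their targets


def minimum_expected(target_units: float) -> float:
    """Units a provider needs to accrue to reach the expected share of its target."""
    return target_units * EXPECTED_PERCENT / 100


def meets_expectation(accrued_units: float, target_units: float) -> bool:
    """True if the accrued units are at least 95 per cent of the target."""
    # Compare in whole percentage terms to avoid rounding surprises.
    return accrued_units * 100 >= EXPECTED_PERCENT * target_units
```

Under these assumptions, a provider funded for 1,500 DTAU would need to accrue at least 1,425 DTAU in the year to meet DH's expectation.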

1.2 Data collection for AOD services

The Victorian Government requires providers to submit data to the VADC monthly about:

- services the Victorian Government funds

- services the Australian Government funds, in some circumstances.

Service providers do not need to report on services that individuals or the private sector fund. In this audit, we only look at services that the Victorian Government funds.

Appendix D lists the data elements that DH collects. DH intends to use this data to:

- monitor providers’ performance

- evaluate AOD programs

- help it develop and improve services across the state

- inform catchment-based planning, demand modelling and research into the AOD sector.

Alcohol and Drug Information System (ADIS)

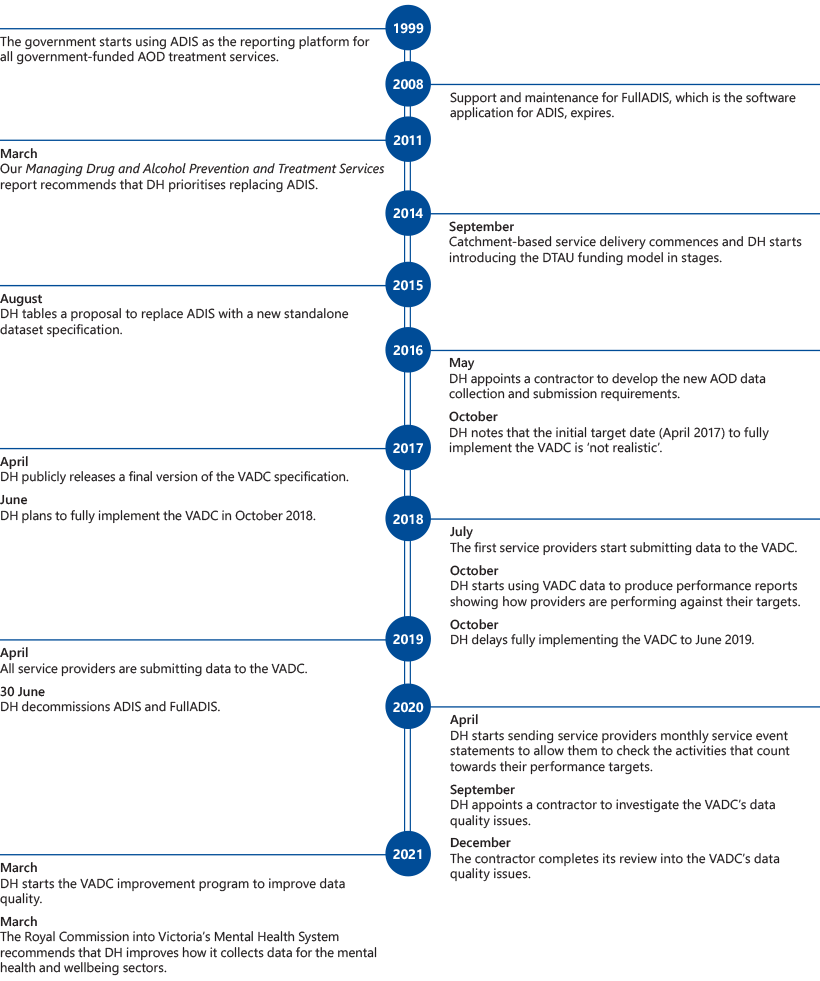

Prior to the VADC, DH's data collection system for the AOD sector was called ADIS. DH used ADIS from 1999 to 2019. It stopped using it because:

- support and maintenance for FullADIS, the software platform service providers used to submit data, stopped in 2008 (this made it a legacy system with no further updates)

- DH received negative feedback from service providers about the burden of collecting data (ADIS required service providers to report 264 data elements to DH)

- the system no longer met the government’s data requirements for service delivery, funding and managing performance after it reformed the AOD sector in 2014.

As a result, DH required service providers to send an Excel spreadsheet with extra data on top of their submissions through FullADIS.

This made reporting data take much longer, and DH found that it still was not getting enough detail to fully monitor demand for services.

Data collection redevelopment project

In August 2015, the former Department of Health and Human Services (DHHS) chose to develop a standalone dataset specification to replace ADIS. This would make service providers responsible for meeting the government’s data collection requirements using their own CMS.

Service providers needed to work with their vendor to customise their CMS so it met the dataset specification's requirements. Some providers also needed to start using a CMS for the first time.

To support this work, DH received funding of $4.1 million over 4 years from 2017–18 to 2020–21 to:

- help it introduce a new data collection, which is now known as the VADC

- support service providers during the transition

- create data-sharing infrastructure so DH could see all AOD clients in the government-funded system and to allow providers to share client data.

In July 2018, DH decided not to progress with the data-sharing infrastructure. This was because it realised it would not be legally possible without clients’ consent to share personally identifiable information with DH.

By April 2019, all service providers had transitioned to submitting data through the VADC.

Figure 1B summarises the process that service providers use to enter, submit and correct data.

FIGURE 1B: VADC data submission process

Source: VAGO, based on DH's internal review of the VADC.

In 2021, the Victorian Alcohol and Drug Association reported in its VADC impact report that service providers found the VADC dataset specification overly complex. It also found that staff at service providers needed to spend a significant amount of time completing, correcting and submitting data to the VADC.

VADC review project

After DH fully implemented the VADC, the data for 2019–20 suggested that service providers were underperforming.

DH engaged the same contractor that developed the VADC dataset specification to find out if this was due to data quality issues.

This review, which the contractor finished in December 2020, found that the main cause of the apparent underperformance was clinicians entering incorrect data into their CMS. It suggested the main reasons for this were:

- DH did not adequately prepare service providers to follow the new dataset specification

- service providers’ CMSs were not supporting clinicians to enter the right data through their workflows and data validation rules

- a lack of user manuals for entering and submitting VADC data.

To address this, the contractor made 5 recommendations to DH to improve data quality. This remediation work is ongoing.

The Royal Commission into Victoria's Mental Health System

The 2021 Royal Commission into Victoria's Mental Health System recommended that DH improves how it collects data about the mental health and wellbeing sectors. This includes:

- reviewing the data elements it collects from mental health service providers

- introducing a mental health and wellbeing record, a mental health information and data exchange to support data sharing between providers and DH, and a portal for clients to access.

DH received government funding in 2022–23 to implement these recommendations. DH told us that it plans to include the AOD sector in this project in 2026.

1.3 Timeline of events relevant to the VADC

Source: VAGO.

2. Planning the VADC

Conclusion

DH’s planning for the VADC has had ongoing impacts on data quality.

DH’s choice to use a standalone dataset specification for the VADC was in line with its strategic priorities. However, it did not adequately consult with the sector or vendors who would need to use it.

This meant that DH did not effectively manage the risk that service providers and vendors might not have the capability to meet the VADC's requirements.

Service providers’ abilities to meet the VADC's data collection requirements still vary significantly.

This chapter discusses:

2.1 Issues with DH’s planning for the VADC

DH's choice of data collection model

DH had its own reasons to choose a standalone dataset specification for the VADC.

However, it did not effectively assess or manage all the potential data quality risks associated with this.

DH did not fully engage with vendors when it was planning and implementing the VADC. This meant that it did not have timely access to their advice on service providers' CMSs and their likely compatibility with the dataset specification.

Service providers also warned DH they would need to overcome capacity and resource constraints to implement the VADC.

DH chose to use a standalone dataset specification because:

- it would provide flexibility for DH and eliminate the risk that it would need to support legacy software in the future

- it was consistent with data collection models that the hospital sector uses

- service providers would only need one CMS to be able to enter and submit data

- most costs would be service providers’ responsibility, not DH’s.

DH told us that other considerations also guided its choice.

For example, Victoria's 2015 Statewide Health ICT Strategic Framework and the 2013 Ministerial Review of Victorian Health Sector Information and Communication Technology supported allowing health providers to choose their own IT systems to meet their local needs.

DH's planning for the VADC

DH's planning for the VADC dataset specification was inadequate. This has led to ongoing data issues.

We used ADHA’s Standards Development Model to assess DH’s planning. The model describes good practices for developing health dataset specifications and the impact of poor practices.

These practices include:

- getting balanced input from stakeholders

- establishing a clear need for the specification

- ensuring that the specification is consistent with other relevant standards.

Figure 2A shows our assessment of DH’s planning compared to the model’s good practice principles. Overall, DH’s planning was not adequate because it only fully met 5 of the 12 principles.

FIGURE 2A: Comparison of DH's planning with better practice principles

| Principle | Definition | VADC standard development |

|---|---|---|

|

Openness |

Standards developers actively seek diverse and explicit stakeholder engagement, input, monitoring and assessment. |

Partially met DH consulted with service providers through its project reference group, a survey and site visits. DH did not consult with service providers’ CMS vendors when it planned the dataset specification, even though the project reference group noted in July 2016 that it was important to engage with vendors early. |

|

Transparency |

Standards developers make information about their plans available to potential stakeholders, including non-experts. |

Partially met DH shared documentation with service providers and vendors, but this was highly technical and difficult for service providers to understand. |

|

Representation |

Standards developers get balanced participation during planning from stakeholders who will be significantly affected. |

Partially met DH consulted with service providers during the planning process but not with vendors who would need to work with the dataset specification. |

|

Impartiality |

Planning does not favour the interests of any particular party. |

Partially met DH led an unbiased process with the stakeholders it consulted. However, it was not possible to lead an impartial process when the range of stakeholders DH consulted was limited. |

|

Consensus |

Stakeholders generally agree to the published standard, without sustained opposition to substantial issues. |

Partially met DH's survey of service providers in September 2016 found significant barriers to implementing the dataset specification, particularly resource, budget and time constraints. Less than 10 per cent of providers reported that there were no barriers. This indicates concern in the sector about DH's proposed plans. It is not clear how DH addressed these concerns. DH conducted limited user testing before implementing the dataset specification. |

|

Market need and net benefit |

There is a clear market need and benefit to the Australian community. |

Met There was a clear need to replace the ADIS legacy data collection system. |

|

Timeliness |

Urgency should not override other principles. |

Not met DH focused on delivering the VADC ahead of addressing stakeholder concerns. Fifty-five per cent of service providers DH surveyed in September 2016 said they faced timeframe constraints in implementing the new dataset specification. DH's contractor noted that DH seemed to ignore their advice for implementing the VADC in stages with significant resources. |

|

Internationality |

International standards should be used in preference to national and regional standards. |

Met DH aligned the VADC to the Australian Government's Alcohol and Other Drug Treatment Services National Minimum Data Set, which draws on international standards. |

|

Compliance |

Standards must comply with relevant laws and regulations. |

Met There is no evidence that DH did not comply with relevant laws and regulations. |

|

Coherence |

Standards must be integrated with other relevant standards. |

Met DH generally based the VADC's data elements on standard health definitions. |

|

Availability |

Standards should be freely available. |

Met The VADC dataset specification and information on submission requirements are freely available online. |

|

Support |

Standards developers should consider producing supplementary material because the specification may not be enough for all users to understand. |

Partially met DH released instructions for preparing and submitting VADC data, but these were also written in technical language. It did not produce a plain language version of the dataset specification. |

The Alcohol and Other Drug Treatment Services National Minimum Data Set is a set of standard data elements that the Australian Government and state and territory health authorities, including DH, have agreed to collect. The Australian Institute of Health and Welfare collects and validates this data.

Source: VAGO, based on ADHA’s Standards Development Model.

2.2 DH’s stakeholder engagement and risk management

The most important stakeholders during VADC planning were service providers and vendors.

Service providers collect and submit the data for the VADC, so they needed to understand the new dataset specification’s requirements.

Vendors needed to make their CMSs comply with the dataset specification’s requirements. They would have been able to advise DH of any technical limitations to collecting or submitting data.

If DH had developed the dataset specification in consultation with these stakeholders, the sector would have been more likely to:

- understand it

- comply with it

- give DH high-quality data.

However, DH did not adequately consider these stakeholders' advice when it made planning decisions and risk assessments.

Stakeholder engagement

DH explained to the AOD sector why it needed to replace ADIS. However, DH did not consult the sector enough on the best option for replacing ADIS.

DH started planning the VADC project in 2016. It formed a project reference group with representatives from DH, the Victorian Alcohol and Drug Association and service providers. The group’s role was to provide expert advice and recommendations about the project for DH to consider.

Service providers

During planning, DH consulted with service providers through site visits and a September 2016 survey about their data collection capacity and capability.

Sixty-three per cent of service providers responded to DH’s survey. Of those respondents, 70 per cent said they would need to overcome resource, capacity or budget constraints to implement the VADC.

It is not clear how these results and other feedback informed DH’s planning decisions.

Vendors

DH did not run an information session for vendors until June 2018.

This was 4 months before the deadline for service providers to start reporting VADC data through their CMSs.

DH did not consult with vendors on the dataset specification’s design. This meant that vendors needed to address errors they found in the dataset specification while implementing it.

Risk management

2015 project brief

DH’s risk assessment in its 2015 project brief underestimated the consequences of service providers’ different data and IT capacities and capabilities.

DH noted there was a high risk that submitted data would not meet its requirements and that this might be caused by unsuitable CMSs.

However, it only gave a medium risk rating to the IT capability and capacity risks that directly contributed to this.

DH's mitigation strategies relied on data validation rules and communicating with service providers. These strategies did not prevent data quality issues though.

2017 risk register

Most of the risks (7 of 12) that DH listed in its 2017 risk register for the VADC project related to service providers’ or vendors’ capacities to implement it.

As with the 2015 project brief, DH anticipated it was highly likely that providers would submit poor-quality data due to inadequate CMSs, but it rated this risk as medium.

DH also rated the risk that service providers might be unable to get suitable CMSs or vendors to implement the data collection requirements within an acceptable cost as medium.

This is despite feedback from service providers and vendors about the high likelihood of these risks materialising.

These risks have all materialised and have become root causes of the data quality problems that continue to affect the VADC.

2.3 Issues with the VADC’s requirements

There are several ongoing issues with the VADC dataset specification and data collection requirements. In particular:

- CMSs vary in their support for data quality

- the dataset specification is too complex for service providers

- service providers need to incur substantial costs to meet the VADC’s requirements

- service providers submit data that does not reflect the care they give to clients.

CMSs vary in their support for data quality

DH told us that there are at least 20 different CMSs that service providers use to collect VADC data throughout the state.

However, there may be more in use because providers do not need to tell DH when they start using a new system.

This leads to data quality issues because:

- CMSs validate data differently

- some CMSs do not produce reliable reports

- vendors’ support for service providers varies.

CMSs validate data differently

CMSs validate data differently. For example, one audited provider’s CMS prompts clinicians in plain language if they are missing data. It will not allow them to progress with the submission until they fix this.

Another provider’s CMS does not always prompt clinicians if they are missing data or accidentally create an error.

If their CMS does not validate data, clinicians do not know if they have made an error until DH reviews their submissions. This happens once a month.

Clinicians then need to fix any errors. This means they may need to adjust data weeks after the client contact occurred.
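The difference between these two CMS behaviours can be sketched in code. The following is a minimal illustration only and is not any vendor's implementation. The field names, the `REQUIRED_FIELDS` mapping and both functions are hypothetical and are not taken from the VADC dataset specification.

```python
# Hypothetical required fields, each mapped to a plain-language prompt.
# These names are illustrative assumptions, not VADC data elements.
REQUIRED_FIELDS = {
    "date_of_birth": "Please enter the client's date of birth.",
    "service_start_date": "Please enter the date the service started.",
    "drug_of_concern": "Please select the client's main drug of concern.",
}


def check_at_entry(record: dict) -> list[str]:
    """Return a plain-language prompt for each required field that is missing."""
    prompts = []
    for field, prompt in REQUIRED_FIELDS.items():
        value = record.get(field)
        if value is None or str(value).strip() == "":
            prompts.append(prompt)
    return prompts


def can_save(record: dict) -> bool:
    """A record can only progress when there are no outstanding prompts."""
    return not check_at_entry(record)
```

A CMS that applies a check like this when the clinician enters the data surfaces the problem during the client contact. A CMS without one leaves the same problem to be found in DH's monthly review.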

Vendors’ business models can affect how their CMSs validate data.

One vendor we consulted provides only minimal data validation in its CMS. This is because its CMS is also used for non-VADC clients and this functionality is only helpful for the VADC.

Some CMSs do not produce reliable reports

We found that 2 of the 4 audited service providers cannot check if their submissions accurately represent their service activities.

This is because their CMSs do not produce reliable activity reports to compare with DH's reports, such as its service event statements or performance reports.

We also found that the other 2 audited providers’ internal reports did not match their performance reports from DH. This could be for reasons such as:

- data entry errors

- errors in how the CMS vendor has interpreted the dataset specification or requirements

- errors in how DH tells providers to enter data to make it contribute to their targets.
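A comparison of this kind can be sketched as follows. This is an illustration under stated assumptions rather than a description of any provider's or DH's process. The `reconcile` function, the activity names and all counts are invented for the example.

```python
def reconcile(internal: dict[str, int], dh_report: dict[str, int]) -> dict[str, int]:
    """Return the internal count minus the DH count for each activity type that differs."""
    differences = {}
    for activity in sorted(set(internal) | set(dh_report)):
        gap = internal.get(activity, 0) - dh_report.get(activity, 0)
        if gap != 0:
            differences[activity] = gap
    return differences


# Made-up figures for illustration only.
internal_counts = {"counselling": 120, "withdrawal": 45, "assessment": 60}
dh_counts = {"counselling": 112, "withdrawal": 45, "assessment": 63}

print(reconcile(internal_counts, dh_counts))
```

A check like this only shows where the two reports disagree. Working out which of the listed reasons caused each difference still requires manual investigation.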

Vendors’ support for service providers varies

While the service providers we audited usually submit their data on time, problems with CMSs can cause delays.

For example, a service provider might not be able to clear an error until its vendor makes a change to its CMS.

DH noted vendors as the cause of data submission delays in 3 of the 10 meetings it had to monitor submission compliance in 2021.

Service providers told us that vendors are reluctant to change their CMSs beyond the minimum requirements set by DH. Service providers can also be hesitant to pay for changes if they lack confidence that they will improve data.

Figure 2B gives an example of how the limitations of a service provider's CMS have affected its data quality.

FIGURE 2B: Case study: issues with how a service provider's CMS supports data quality

This service provider has worked with its vendor to develop workarounds to VADC errors, which sometimes involve changing data about clients. The provider leaves a note in the record to inform clinicians about anything it has changed.

The provider has sometimes needed to delay its data submissions while the vendor adjusts the CMS to resolve errors.

The provider cannot extract useable service activity reports from its CMS to check if its data is accurate. Its CMS also has minimal data validation controls. This means that clinicians make errors without realising and need to fix them retrospectively.

Source: VAGO.

The VADC dataset specification is too complex for service providers

All audited service providers and 3 of the 4 vendors we spoke to emphasised the complexity of the VADC dataset specification and its requirements. These 3 vendors told us that the VADC is the most complex dataset specification they work with. The fourth vendor said that the dataset specification provides a good level of detail for technical users.

Three of the 4 vendors we consulted support more than one provider. These vendors said that some service providers rely on their help to interpret the dataset specification.

The dataset specification is 250 pages and written in technical language that is difficult for non-data specialists to understand. This includes many AOD clinicians, who enter data.

Data elements

The current VADC dataset specification has 87 data elements.

In comparison, the Alcohol and Other Drug Treatment Services National Minimum Data Set has 35 elements. This is the minimum amount of data that states need to collect.

This means that the VADC collects much more data than the Australian Government requires, which increases the burden of collecting data on service providers.

DH's internal review also found that DH has not adequately explained to service providers why it needs to collect the data it obtains.

Errors

The VADC dataset specification has 139 data errors and warnings that service providers may need to fix when they submit their data.

For example, errors and warnings about:

- missing data

- incorrect combinations

- data outside the required range.

To resolve an error, service providers need to fix it in their CMS and resubmit the data to check it, which can be time-consuming.
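The three kinds of checks listed above can be illustrated with a short sketch. The rules, field names and severity levels below are hypothetical examples and are not drawn from the VADC dataset specification's 139 errors and warnings.

```python
# Hypothetical permitted range for illustration only.
VALID_AGE_RANGE = range(0, 121)


def validate(record: dict) -> list[tuple[str, str]]:
    """Return (severity, message) pairs for one submitted record."""
    issues = []

    # 1. Missing data
    if not record.get("client_id"):
        issues.append(("error", "client_id is missing"))

    # 2. Incorrect combination: an end reason supplied without an end date
    if record.get("end_reason") and not record.get("end_date"):
        issues.append(("error", "end_reason supplied without end_date"))

    # 3. Data outside the required range
    age = record.get("age")
    if age is not None and age not in VALID_AGE_RANGE:
        issues.append(("warning", f"age {age} is outside 0-120"))

    return issues
```

Each resubmission reruns every rule against every record, which is one reason why fixing and rechecking errors through a monthly submission cycle takes time.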

If one service provider leads a consortium of service providers, then the lead provider sometimes manages data submissions and errors for all of the providers in the consortium.

In May 2022, service providers generated 5,605 errors, with an average of 63 errors per provider.

Service providers need to incur substantial costs to meet VADC requirements

There are 89 service providers in the AOD sector. These providers range significantly in size. DH found in its December 2020 review that 44 per cent of providers combined delivered just 5 per cent of all AOD treatment services.

Small service providers do not have the same resources and capabilities to manage their CMSs and data as larger service providers.

In the same review, DH found that only one of the 18 service providers it assessed had a high IT capability and only 3 had a high data capability.

The review also found that one-third of the service providers it assessed had a low IT capability or data capability.

In September 2021, DH's contractor found that:

- 50 per cent of data submissions have a large volume of quality issues, which service providers have had to resolve

- an additional 30 per cent of submissions have data quality issues that service providers are not resolving.

This suggests that most service providers do not have the capability or capacity to address their data quality issues.

Providers are also incurring significant costs to meet the requirements of the VADC.

Financial resources

When DH asked for quotes to develop the VADC dataset specification, it specified that the dataset should minimise the burden on service providers. This has not occurred.

Service providers use their service agreement funding to meet the VADC’s requirements.

The 4 audited providers estimate that they have collectively spent at least $2 million on VADC compliance since DH introduced it.

This is well over the $280,000 in grants they received from DH for this purpose. Providers could also use some of these grants for IT equipment to support telehealth and remote service delivery.

In September 2021, DH's contractor estimated that the AOD sector spends at least $2 million a year to fix data quality issues, including $1.8 million by service providers.

This means that service providers have less funding available to deliver their services.

DH expects that service providers will spend some of its funding on administrative costs. It does not have guidelines about how much is appropriate for this purpose though.

Human resources

Service providers need staff with highly specialised IT skills to help them submit high-quality data. DH does not require providers to have staff with these skills though.

Three audited service providers have staff members assigned to spend a substantial amount of time meeting VADC requirements. This includes:

- completing data submissions

- helping clinicians understand the data they need to enter.

Two audited service providers have hired experienced data analysts to help them improve their data quality. These providers still have difficulty ensuring that their performance reports reflect the services they have delivered. Figure 2C gives an example of this.

The other 2 service providers rely on staff who are not data specialists to fix errors and meet their submission requirements.

FIGURE 2C: Case study: hiring specialist staff to improve data quality

This data analyst is responsible for submitting error-free datasets and has also developed internal reports to investigate data quality issues. This involves directly downloading data from the CMS, matching it to DTAU rules and then comparing it to the DTAU allocation listed in the provider’s service event statements.

Through these reports, the provider found that the expected DTAU for its therapeutic day rehabilitation program was 24 per cent lower than its internal report indicated.

The provider investigated this issue and found that its CMS did not differentiate between clients who should be receiving DTAU and those who should not.

The provider told us that it developed a manual workaround in its CMS to fix the problem. The provider and DH consider this workaround acceptable. The provider has not pursued changing its CMS because this would require more resources.

Source: VAGO.
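The kind of check described in Figure 2C can be sketched as follows. This is a simplified illustration under stated assumptions. The DTAU weights, the `dtau_eligible` flag and all figures are invented, and the sketch assumes that the internal report was higher because the CMS counted clients who should not receive DTAU. DH's actual funding rules are more detailed.

```python
# Hypothetical DTAU weight per service type (invented values).
DTAU_WEIGHTS = {"day_rehab": 2.0, "counselling": 1.0}


def expected_dtau(events: list[dict], respect_eligibility: bool = True) -> float:
    """Sum DTAU weights over service events, optionally skipping ineligible clients."""
    total = 0.0
    for event in events:
        if respect_eligibility and not event["dtau_eligible"]:
            continue
        total += DTAU_WEIGHTS[event["service_type"]]
    return total


def percent_difference(statement_dtau: float, internal_dtau: float) -> float:
    """Statement figure relative to the internal figure (negative means lower)."""
    return (statement_dtau - internal_dtau) / internal_dtau * 100


# Made-up service events for illustration only.
events = [
    {"service_type": "day_rehab", "dtau_eligible": True},
    {"service_type": "day_rehab", "dtau_eligible": True},
    {"service_type": "day_rehab", "dtau_eligible": True},
    {"service_type": "day_rehab", "dtau_eligible": False},
]

internal = expected_dtau(events, respect_eligibility=False)  # CMS ignores eligibility
statement = expected_dtau(events, respect_eligibility=True)  # eligibility applied
print(round(percent_difference(statement, internal), 1))
```

In this made-up example, the gap disappears once the internal report applies the same eligibility flag as the statement. This is broadly what a manual workaround in a CMS would need to achieve.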

Service providers submit data that does not reflect the care they give to clients

Three audited service providers told us that they sometimes need to change the data they submit to the VADC to resolve errors.

When this occurs, their VADC data does not accurately reflect the services they have delivered.

For example, one provider sometimes backdates the last date a client used a drug. This is because the VADC dataset specification does not allow clinicians to enter a date that is after the date a client finished their treatment.

The provider does this if a client briefly contacts a clinician after their treatment has finished and their clinician considers this contact to be part of the previous course of treatment. Another provider told us that it manages this issue by submitting blank data if the correct date would trigger an error.
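The effect of this rule and the two workarounds can be shown with a short sketch. The function below is a hypothetical form of the rule, written from the description above rather than from the dataset specification. It also assumes that a blank date passes validation, which is implied by the second provider's approach but is not confirmed in the specification.

```python
from datetime import date
from typing import Optional


def last_use_date_is_valid(last_use: Optional[date], treatment_end: date) -> bool:
    """Hypothetical rule: the last drug use date must not be after the treatment end date.

    A blank (None) date is assumed to pass.
    """
    return last_use is None or last_use <= treatment_end


treatment_end = date(2022, 3, 1)
true_last_use = date(2022, 3, 10)  # reported in a brief contact after treatment ended

accurate = last_use_date_is_valid(true_last_use, treatment_end)   # rejected
backdated = last_use_date_is_valid(treatment_end, treatment_end)  # accepted, but inaccurate
blank = last_use_date_is_valid(None, treatment_end)               # accepted, but incomplete
```

Only the accurate value fails the check. Both workarounds pass validation, which is why data can be error-free without reflecting the care the client received.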

Two audited providers also told us that they cannot always enter drugs of concern accurately in their CMS.

There is also evidence that some service providers alter their data to get DTAU funding that may not otherwise be available to them under current funding rules. This is because some service providers believe that the rules do not account for the work they do.

3. Improving data quality

Conclusion

DH needs to improve the VADC’s data quality so it has complete and accurate information about AOD services across the state.

DH makes limited use of VADC data to monitor AOD services and DFFH uses it to broadly monitor individual providers' performance.

DH understands the data quality issues that affect the VADC and is working to address them. This will likely achieve some improvement in data quality.

However, DH’s work will likely not address the complexity of the dataset specification, service providers’ different data capabilities and variation within their CMSs. This means that the data quality issues will likely continue.


3.1 How DH and DFFH use VADC data

DH planned to use VADC data to:

- monitor AOD treatment services and demand across the state

- monitor service providers’ performance

- plan AOD services.

However, data quality issues limit the data’s reliability for these purposes.

Monitoring AOD services and demand

DH uses statistics based on VADC data to do limited monitoring of AOD services across the state.

However, it does not use VADC data for demand modelling.

It has also not used VADC data to help it continue developing AOD funding models or develop performance indicators based on client outcomes, which it intended to do.

Monitoring service providers’ performance

DFFH uses data from the VADC to monitor providers alongside data from other sources.

DFFH told us that the VADC’s data quality and timeliness need to improve before it can rely on the data to adjust service providers’ funding and targets in response to underperformance.

DFFH told us that, during the period we examined (July 2020 to December 2021), it did not performance manage any service provider specifically for underperforming in its AOD services.

Normal performance management was suspended during this time because of the COVID-19 pandemic.

Planning AOD services

DH currently gives catchment planners raw data from the VADC.

This means that planners’ data capabilities determine how they can use the data.

DH is developing the following reports to improve how it gives data to the sector:

- reports for service providers that benchmark their performance against other providers

- reports for service providers and catchment planners about client demographics and how clients use services.

DH is also planning to develop an interactive dashboard for catchment planners using VADC data. It has not started this work yet.

3.2 Issues with the VADC’s data quality

We assessed the current state of the VADC against the ADHA’s high-quality data characteristics and DPC’s data quality dimensions. Figure 3A combines these quality elements and assesses:

- the gap between the current and ideal state of the VADC

- if DH’s improvement program will sufficiently address this gap.

FIGURE 3A: Our assessment of the VADC against ADHA’s data quality characteristics and DPC's data quality dimensions

| ADHA data quality characteristic | Related DPC data quality dimension | Definition | Addressed |
|---|---|---|---|
| Clear, well-defined scope | Fit for purpose | The dataset addresses a clear problem. | Not addressed: DH has not explained the purpose of all the VADC’s data elements to service providers. |
| Collection | Collection | The data collection method is known and consistent. | Partially addressed: CMSs vary in how well they support data quality. DH is not clear about the specific improvements it seeks to CMSs to improve support for data quality. DH’s training and online materials have limited value due to service providers’ varied data capabilities, different CMS platforms and staff turnover. |
| Plain language | Collection | Requirements are clear enough that a technically competent user can understand it or a less competent user can use it with reasonable effort. | Partially addressed: The dataset specification is written in technical language that is difficult for non-technical users to understand. DH added code descriptions to service event statements, released an information guide for the statements and developed plain language versions of funding rules. This has made the service event statement easier to use but is unlikely to be enough detail for users with low data literacy. DH does not plan to make a plain language version of the dataset specification. Service providers use different CMSs, which limits DH’s ability to create data entry guides. Providers can make their own guides that are specific to their CMS. |
| Accuracy and completeness | Accuracy and completeness | The data is accurate, valid and complete to a certain standard. | Partially addressed: DH has found that VADC data does not accurately represent service providers’ activities because clinicians sometimes enter inaccurate data. Some providers cannot compare their internal records against their VADC submissions to ensure they are complete. DH does not plan to require CMSs to produce reports for this purpose. DH has not started analysing the effectiveness of its work to improve data quality, which it planned to do. |
| Representative | Representative | The dataset represents the conditions or scenario that it refers to. | Partially addressed: Service providers sometimes enter data that does not reflect the services they give to clients. DH’s annual change process adjusts data entry requirements based on submissions from the sector or DH. DH does not plan to review the dataset specification to identify requirements that are inconsistent with common AOD practices or prevent providers from entering accurate data. |
| Quality control | Consistency | There should be quality control in the data collection method to avoid errors. | Partially addressed: The VADC does have data quality controls, which we discuss in Section 3.3. However, DH’s internal review found that the VADC’s widespread quality issues are largely due to clinicians entering incorrect data. Service providers choose their own CMS, regardless of how well it supports data quality. CMSs’ data validation controls vary. Not all CMSs help prevent clinicians from making errors. DH has not defined a minimum level of data validation controls that CMSs should have. DH plans to give in-depth one-on-one support to selected providers to help them address complex issues. |
| Maintenance and support | Collection | The data owner should provide further direction and guidance or provide a platform for ongoing maintenance. | Partially addressed: Service providers need to purchase and maintain their own CMS to collect data for the VADC. DH’s training and online materials have limited value due to service providers’ staff turnover, different data capabilities and different CMSs. Providers can make their own guides for entering and submitting data that are specific to their CMS. |
| Timeliness and currency | Timeliness | The timeliness and currency of the data are appropriate for its use. | Addressed: Service providers generally submit data on time. The VADC’s data quality statement clearly outlines timeliness constraints. |

Source: VAGO, based on ADHA’s Standards Development Model and DPC’s Data Quality Guideline.

3.3 DH’s work to improve data quality

DH is improving how it analyses and manages data quality issues.

While it is working with service providers and vendors to address known issues, its remedial work will likely not address all of them sufficiently.

How DH manages data quality

Data quality statement

DPC’s Data Quality Guideline requires government agencies to have a data quality statement and data quality management plan for all of their critical datasets.

In March 2022, DH published its first data quality statement for the VADC as part of the 2022–23 dataset specification.

DH has not published a data quality management plan though. This means it does not have a documented plan that outlines known data quality issues and how it plans to address them.

Data quality controls

DH uses its own data validation controls to check that service providers’ data meets certain formats and other rules, such as permitted data combinations. DH sends providers a validation report for each data submission, which shows any errors and warnings in the data.

However, this does not stop service providers from submitting data that passes DH’s validation checks but does not reflect the services they actually delivered, which we discuss in Section 2.3.

To address this, DH occasionally analyses the VADC to detect unusual data. For example, it does this:

- if the Australian Institute of Health and Welfare raises an issue about the data DH has submitted for national reporting

- for training sessions as part of its improvement program.

DH also monitors if service providers make submissions on time and follows up with providers who are late.

Annual changes to the VADC dataset specification

DH has an annual process for changing the VADC dataset specification, which will likely improve the dataset over time.

During this process, DH considers proposals and feedback from:

- DH, which can be on behalf of the sector

- service providers

- vendors.

DH’s change control group and change management group review these proposals. Each group has representatives from the sector.

DH is limiting dataset specification changes to essential changes, such as fixing incorrect business rules, until data quality stabilises.

While this process helps DH maintain and improve data quality, it still relies on service providers to collect accurate data through their CMSs.

Service providers’ data quality controls

DH requires service providers to submit complete and accurate data to the VADC as part of their funding agreements.

DH recommends that service providers:

- have a consistent data processing approach

- review errors or missing data weekly

- run regular training and familiarise staff with DH’s resources

- use resources DH provides to support data quality, such as validation reports and service event statements.

DH does not routinely check if service providers comply with these controls.

We found that the 4 audited service providers generally comply with them.

However, all of these providers usually review errors monthly rather than weekly to align with DH’s submission timelines.

One provider reviews DH’s service event statements approximately every 3 months due to a lack of time.

Service providers vary in how confident they are in responding to errors and in how well they understand DH’s service event statements.

DH does not require CMSs to validate data at the point of entry or produce activity reports for service providers to check if the data they submit is complete.

DH’s improvement program

DH’s December 2020 internal review into the VADC’s data quality recommended that it:

1. implements a continual improvement program, including:

- training programs for service providers and vendors

- improvements to CMS platforms

- plain language instructions on the VADC's requirements

2. provides clearer and more frequent service event statements

3. reports on VADC data more frequently and consistently to service providers

4. reviews compliance reports on data submissions

5. changes the VADC dataset specification less frequently.

DH hired the contractor that developed the VADC dataset specification to deliver the first recommendation, the continual improvement program. This work is ongoing.

DH is working on the third recommendation and has finished the remaining 3 recommendations.

In February 2022, DH further expanded its continual improvement program to include:

- expanding its training for service providers

- producing online learning materials

- providing detailed support to providers with complex issues

- holding quarterly VADC forums for service providers

- improving how it communicates with service providers

- advising vendors on how to improve their CMSs, helping service providers work with their vendors, and encouraging user group meetings between providers and vendors.

DH’s contractor will also deliver this work.

Impact of DH’s improvement program

DH’s actions are positive and will help service providers improve the accuracy of their submissions.

DH’s work will likely improve data quality to an extent over time. There is already some evidence that this is happening. For example:

- the number of errors in service providers’ submissions remained stable in 2020–21 and trended down from July 2021 to May 2022. DH expects the number of errors to increase when it changes the dataset specification every July

- DFFH told us that it has seen some improvements in data quality and timeliness since December 2021.

However, this work will likely not address all the root causes of the VADC's data quality issues because:

- the VADC will still be a complex dataset compared to the Australian Government’s Alcohol and Other Drug Treatment Services National Minimum Data Set

- DH’s technical documents and supporting guides are still difficult for clinicians who enter the data to understand

- service providers continue to choose their own CMSs regardless of how well they support data quality

- there will still be service providers with low data capabilities who require extensive and ongoing support to improve the quality of their data.

Staff turnover at service providers also means that the training DH is planning might not sustain data quality improvements unless it makes broader changes to the dataset specification and data collection model.

Appendix A. Submissions and comments

Click the link below to download a PDF copy of Appendix A. Submissions and comments.

Appendix B. Acronyms, abbreviations and glossary

Click the link below to download a PDF copy of Appendix B. Acronyms, abbreviations and glossary.


Appendix C. Scope of this audit

Click the link below to download a PDF copy of Appendix C. Scope of this audit.

Appendix D. Data elements in the VADC

Click the link below to download a PDF copy of Appendix D. Data elements in the VADC.
