Clinical Governance: Health Services

Snapshot

Do health services' systems and processes assure quality and safe care?

Why this audit is important

The safety and quality of Victorian health services is vital to all patients and their families and carers. It is therefore crucial that health services have rigorous clinical governance systems and cultures to deliver safe, person-centred and effective care.

In 2016, the Victorian Government commissioned an independent review to assess how the then Department of Health and Human Services was overseeing the quality and safety of patient care across the state. This review, known as 'Targeting Zero', made many recommendations to improve the health sector and asked us to audit progress in addressing them.

Who we examined

We examined Ballarat Health Services, Djerriwarrh Health Services, Melbourne Health and Peninsula Health as a representative selection of Victorian public health services.

What we examined

Whether the four health services:

- set clear clinical governance expectations

- have established a culture of patient safety

- understand and respond to quality and safety risks at the board and executive levels.

What we concluded

Health services' systems and processes do not consistently ensure they are providing high quality and safe patient care.

None of the audited health services investigate all serious incidents promptly, and only one acts on recommendations in a timely way to prevent safety risks recurring.

More than four years after Targeting Zero, some health services are still not fully 'living' their local clinical governance frameworks. By not prioritising and engaging in this work, they are not doing enough to improve patient safety.

Differences in progress between the four health services relate to differences in their size and, consequently, their resources and the maturity of their systems to deliver quality and safe care.

What we recommended

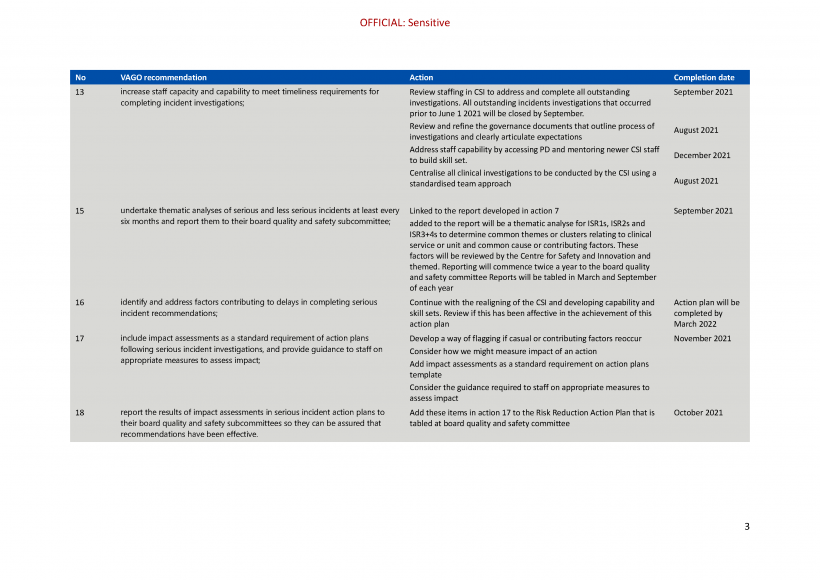

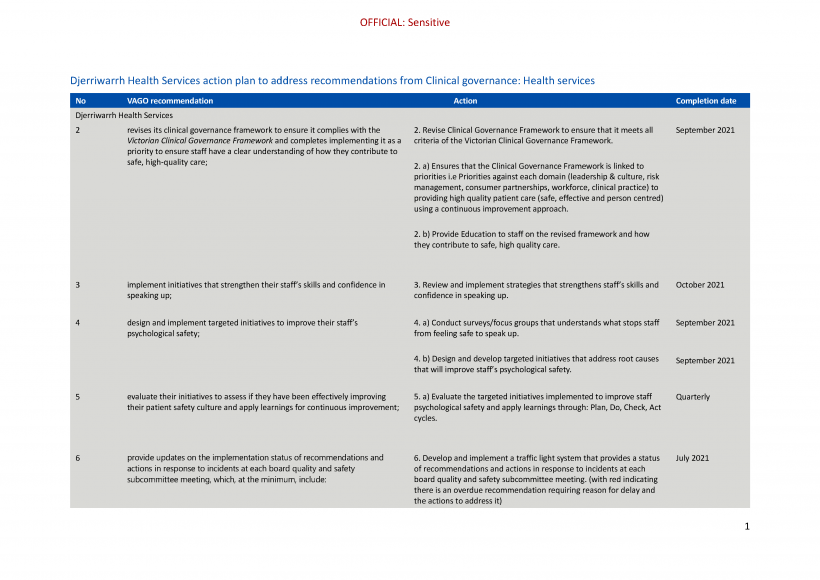

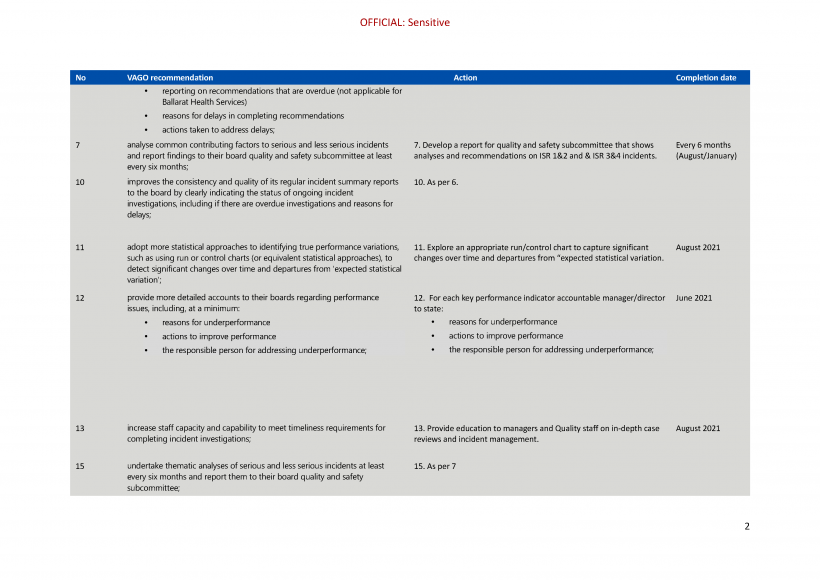

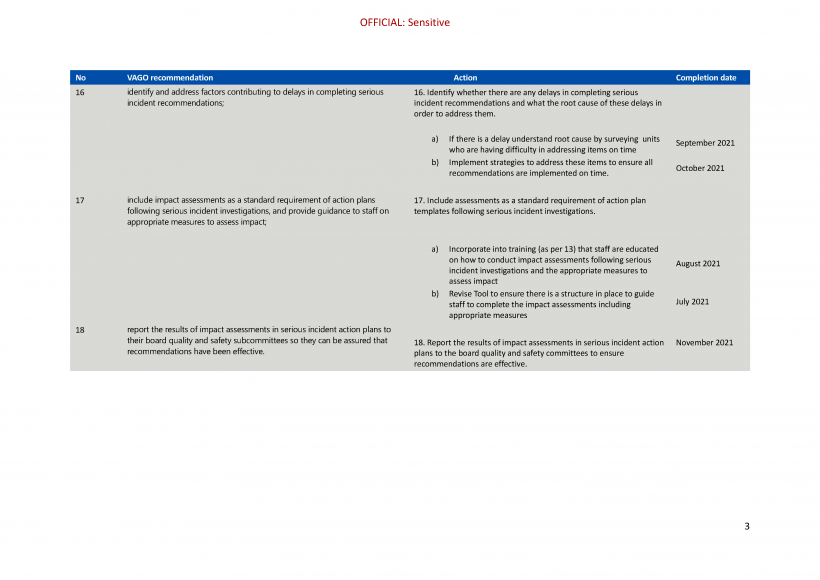

We made 18 recommendations in total across Ballarat Health Services, Djerriwarrh Health Services, Melbourne Health and Peninsula Health. These aim to ensure health services implement their local clinical governance frameworks, improve their patient safety culture and improve the quality and safety performance reporting they provide to their boards. The four audited health services have accepted all our recommendations.


Key facts

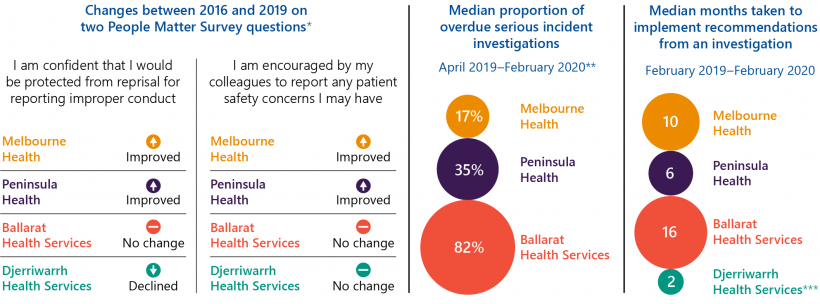

Note: * The People Matter Survey is an annual public sector survey. ** We excluded Djerriwarrh Health Services as there were only five serious incidents during our sample period. *** The recommended actions that Djerriwarrh Health Services had to undertake were relatively simple.

What we found and recommend

We consulted with the audited agencies and considered their views when reaching our conclusions. The agencies’ full responses are in Appendix A.

Establishing and embedding clinical governance frameworks

Health services are a range of organisations that provide healthcare, including public hospitals, as defined by the Health Services Act 1988.

The Victorian Clinical Governance Framework (VCGF) sets the Victorian Government's expectations for the systems and processes that public health services need to deliver safe and high-quality healthcare. The VCGF requires each health service to establish its own clinical governance framework to support its staff to work towards common goals. While accounting for local needs, these individual frameworks must comply with the VCGF.

Melbourne Health (MH) and Peninsula Health (PH) have met this requirement. Both health services have developed clinical governance frameworks that comply with the VCGF and have embedded them through initiatives to translate their expectations into practice. Through these efforts, MH and PH are reinforcing a consistent message to their staff and keeping them focused on achieving high-quality care.

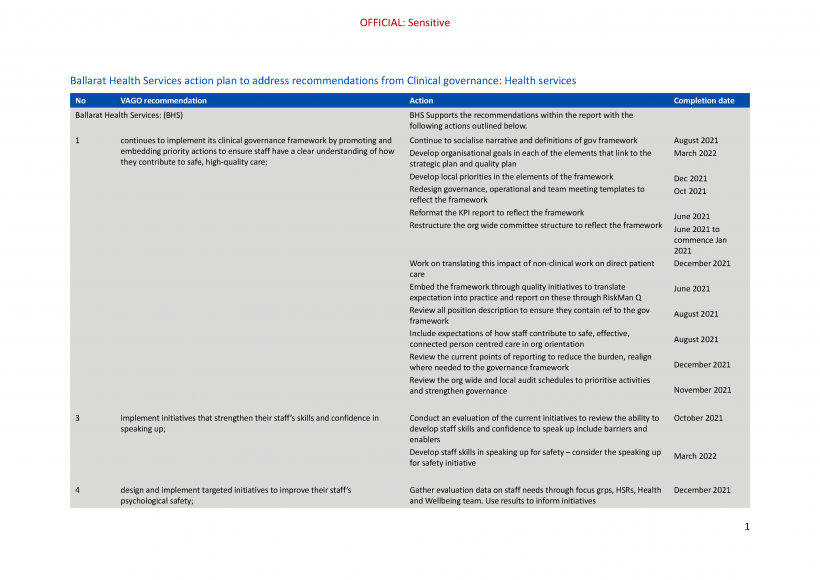

In contrast, while Ballarat Health Services (BHS) started developing its framework in October 2019, it only completed it in January 2021—three and a half years later than expected. It has only recently started promoting and implementing it.

Djerriwarrh Health Services' (DjHS) framework does not fully comply with the VCGF because it does not identify underlying priorities to help it achieve its framework goals, such as activities to ensure a visible and engaged executive leadership. To date, DjHS has not implemented its framework; it has not actively promoted or embedded it in its operations to drive quality improvement activities.

As a result, unlike MH and PH staff, staff we interviewed at BHS and DjHS did not have a good understanding of their organisation’s clinical governance framework or the priorities and expectations it contains.

While the Department of Health (DH) requires health services to comply with the VCGF as part of its Policy and Funding Guidelines, it does not assess whether they do so.

Recommendations about establishing and embedding clinical governance frameworks

| We recommend that: | | Response |
|---|---|---|
| Ballarat Health Services | 1. continues to implement its clinical governance framework by promoting and embedding priority actions to ensure staff have a clear understanding of how they contribute to safe, high-quality care (see Sections 2.1 and 2.2) | Accepted by: Ballarat Health Services |
| Djerriwarrh Health Services | 2. revises its clinical governance framework to ensure it complies with the Victorian Clinical Governance Framework and completes implementing it as a priority to ensure staff have a clear understanding of how they contribute to safe, high-quality care (see Sections 2.1 and 2.2). | Accepted by: Djerriwarrh Health Services |

Establishing and supporting a positive patient safety culture

Health services with a positive patient safety culture are more likely to detect clinical risks early, which allows them to act and prevent avoidable harm to patients. A health service has a positive patient safety culture when staff:

- are safe from bullying and harassment and do not fear reprisal or retribution when they speak up about personal or patient safety concerns

- are confident to speak up to their peers and managers about personal or patient safety concerns and are confident that management will act

- actively engage in activities that maintain or increase their focus on safe and high-quality care.

Only MH and PH have established a positive patient safety culture.

People Matter Survey

The People Matter Survey (PMS) measures different aspects of workplace culture, such as job satisfaction and career development, across the Victorian public sector. It has a specific section on 'patient safety climate' for health services only.

The Victorian Public Sector Commission’s annual People Matter Survey (PMS) captures staff perceptions of the patient safety culture at their workplace and how safe they feel to speak up. At MH and PH, staff perceptions have improved since 2016, which was when the Victorian Government released the Targeting Zero: Supporting the Victorian hospital system to eliminate avoidable harm and strengthen quality of care report (Targeting Zero). This means that staff at these health services are now more likely to report incidents, understand the importance of quality improvement activities and participate in them to reduce the risk of patient harm.

By contrast, BHS and DjHS have not improved their relevant PMS results since 2016. DjHS’s results for bullying and how safe staff feel to speak up have deteriorated.

All four health services, especially DjHS, scored low results in:

- staff feeling safe from reprisal if they report improper conduct

- staff confidence in the integrity of investigations into safety issues.

This suggests that the audited health services need to be more transparent about their investigation processes and address staff concerns about reprisal to strengthen their patient safety cultures.

Culture initiatives

MH and PH have a comprehensive suite of initiatives to build and maintain a positive patient safety culture. They directly support their staff to speak up and provide multiple avenues for them to do so. For instance, MH trains its staff to use a communication tool that uses a stepped approach to raising and escalating concerns with colleagues. MH and PH also promote and reward desired values and behaviours, and MH has evaluated its initiatives to identify barriers and enablers to patient safety.

BHS and DjHS have initiatives to increase staff awareness of patient safety and set expected values and behaviours. However, they lack initiatives to develop their staff’s skills and confidence to speak up. This is especially concerning for DjHS, whose PMS results indicate that its staff do not feel safe to do so.

Recommendations about establishing and supporting a positive patient safety culture

| We recommend that: | Response | |

|---|---|---|

| Ballarat Health Services and Djerriwarrh Health Services | 3. implement initiatives that strengthen their staff’s skills and confidence in speaking up (see Sections 3.2 and 3.3) | Accepted by: Ballarat Health Services and Djerriwarrh Health Services |

| 4. design and implement targeted initiatives to improve their staff’s psychological safety (see Sections 3.2 and 3.3) | Accepted by: Ballarat Health Services and Djerriwarrh Health Services | |

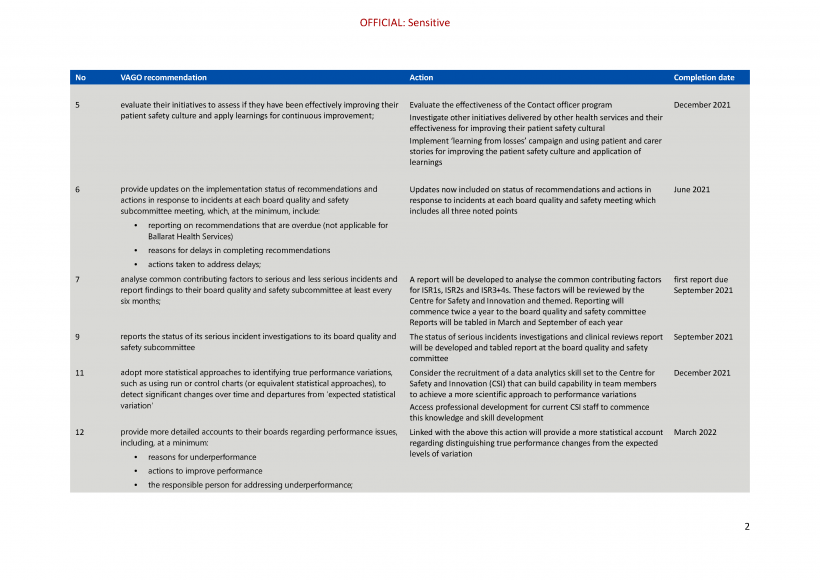

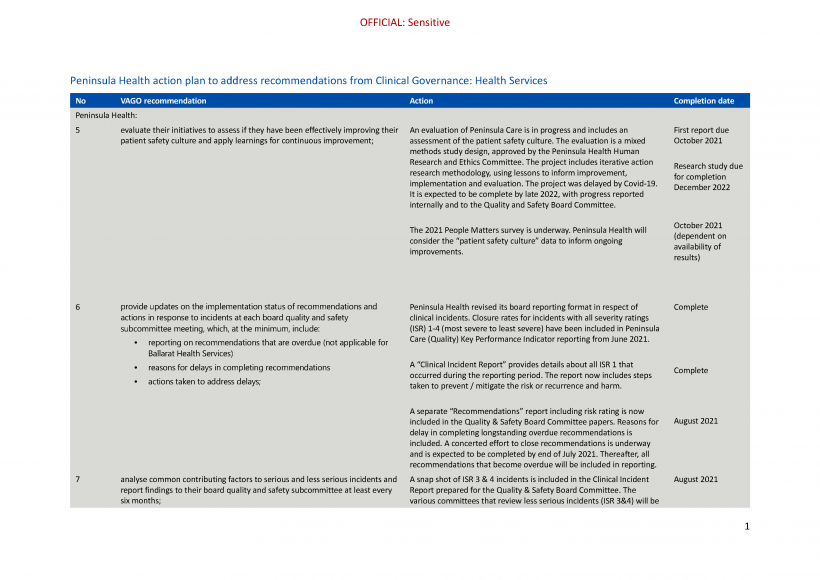

| Peninsula Health, Ballarat Health Services and Djerriwarrh Health Services | 5. evaluate their initiatives to assess if they have been effectively improving their patient safety culture and apply learnings for continuous improvement (see Section 3.3). | Accepted by: Peninsula Health, Ballarat Health Services and Djerriwarrh Health Services |

A health service board consists of individual directors that the Minister for Health appoints. Each board is responsible for the performance of its health service and is accountable to the Minister for Health. Each board also establishes a quality and safety subcommittee, which focuses on overseeing quality and safety risks and performance.

Clinical incidents are classified using an incident severity rating (ISR). This rating scale ranges from ISR 1, which is death or severe harm, to ISR 4, which is no harm or a near miss.

In this report, we use the term serious incidents to refer mainly to ISR 1 and ISR 2 incidents, while less serious incidents refer mainly to ISR 3 and ISR 4 incidents.
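The grouping described above can be sketched in a few lines of Python. This is only an illustration of the report's terminology: the function and constant names are hypothetical, and the report says the terms refer "mainly" to these ratings, so real classifications may include exceptions that this sketch ignores.

```python
# Illustrative only: names are hypothetical, not taken from any health service system.
SERIOUS_RATINGS = {1, 2}       # ISR 1 and ISR 2
LESS_SERIOUS_RATINGS = {3, 4}  # ISR 3 and ISR 4


def incident_group(isr: int) -> str:
    """Map an incident severity rating (ISR 1 to 4) to the group used in this report."""
    if isr in SERIOUS_RATINGS:
        return "serious"
    if isr in LESS_SERIOUS_RATINGS:
        return "less serious"
    raise ValueError(f"ISR must be between 1 and 4, got {isr}")


if __name__ == "__main__":
    for rating in (1, 2, 3, 4):
        print(f"ISR {rating}: {incident_group(rating)}")
```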

Identifying and responding to quality and safety risks

Gaps in how health service boards monitor quality and safety

Health services identify quality and safety risks by monitoring clinical incidents and their quality and safety performance indicators. A health service should prepare regular reports for its board so the board can assure DH, the Minister for Health and its local community that it is providing high-quality and safe care.

The boards at all four audited health services receive regular reports on incidents and quality and safety performance indicators. However, there are gaps in these reports that limit each board’s ability to be assured that its health service is promptly identifying and addressing quality and safety risks and areas of underperformance. Specifically:

| Reports provided by the relevant health service/s to the … | do not provide … |
|---|---|
| MH board | detailed updates on the implementation status of recommendations and actions. |
| PH board | a comprehensive account of overdue serious incident investigations and recommendations because they: |
| BHS board | the status of serious incident investigations. |
| BHS board | consistent and clear information on reasons for delays in implementing recommendations, or what actions executives are taking to address these delays. |
| DjHS board | consistent and clear information on the status of serious incidents. |
| DjHS board | the implementation status of recommendations. |
| PH, BHS and DjHS boards | regular analyses on common contributing factors to serious incidents. |
| MH, PH, BHS and DjHS boards | regular analyses on common contributing factors to less serious incidents. |

Recommendations about gaps in how health service boards monitor quality and safety

| We recommend that: | Response | |

|---|---|---|

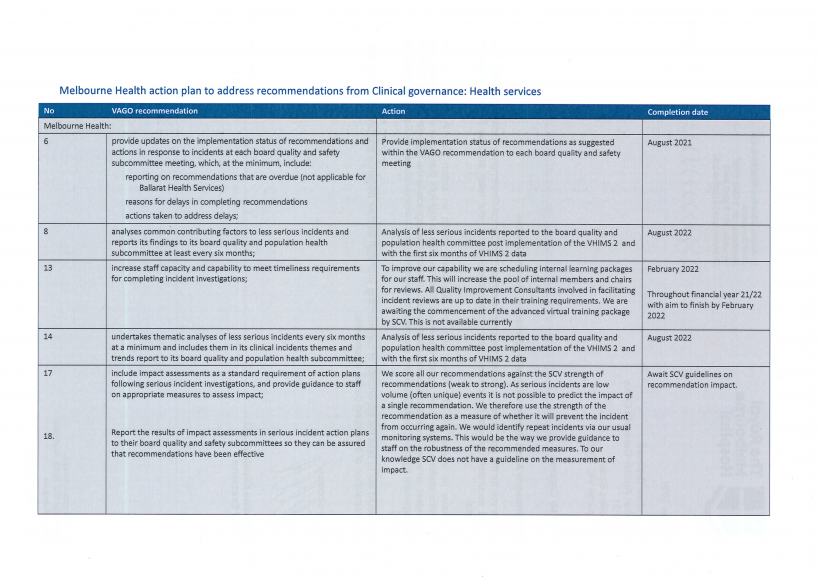

| Melbourne Health, Peninsula Health, Ballarat Health Services and Djerriwarrh Health Services | 6. provide updates on the implementation status of recommendations and actions in response to incidents at each board quality and safety subcommittee meeting, which at the minimum, include:

|

Accepted by: Melbourne Health, Peninsula Health, Ballarat Health Services and Djerriwarrh Health Services |

| Peninsula Health, Ballarat Health Services and Djerriwarrh Health Services | 7. analyse common contributing factors to serious and less serious incidents and report findings to their board quality and safety subcommittee at least every six months (see Sections 4.2 and 4.3) | Accepted by: Peninsula Health, Ballarat Health Services and Djerriwarrh Health Services |

| Melbourne Health | 8. analyses common contributing factors to less serious incidents and reports its findings to its board quality and population health subcommittee at least every six months (see Sections 4.2 and 4.3) | Accepted by: Melbourne Health |

| Ballarat Health Services | 9. reports the status of its serious incident investigations to its board quality and safety subcommittee (see Section 4.2) | Accepted by: Ballarat Health Services |

| Djerriwarrh Health Services | 10. improves the consistency and quality of its regular incident summary reports to the board by clearly indicating the status of ongoing incident investigations, including if there are overdue investigations and reasons for delays (see Section 4.2). | Accepted by: Djerriwarrh Health Services |

Analysing and responding to performance indicators

To comprehensively oversee its quality and safety performance, a health service board must:

- monitor sufficient and relevant performance indicators

- understand when results represent a genuine change in performance

- know that its executives are acting when performance does not meet targets.

A statement of priorities (SOP) is an annual accountability agreement between a health service and the Minister for Health. It includes indicators and performance targets that cover service quality, accessibility and financial viability.

In this audit, we focused on indicators specific to service quality and accessibility and excluded the ones relating to financial viability.

Statement of priorities

At a minimum, health services should monitor their quality and safety performance by tracking it against the mandatory key performance indicators (KPIs) in their statement of priorities (SOP) to have a sufficient view of safety and quality. Beyond their SOP, health services should also monitor KPIs that are relevant to their clinical governance framework goals to assess if they are achieving them.

Of the four audited health services, only PH monitors all of its SOP quality and safety indicators in its routine internal board reporting. MH monitors most of its relevant SOP indicators and BHS and DjHS have significant gaps. Specifically:

| Internal quality and safety KPI reports to the board at … | do not include … | that relate to … |
|---|---|---|
| MH (bimonthly) | 3 per cent of its SOP indicators (one out of 31 indicators) | patients waiting for elective surgery for longer than clinically recommended. However, it includes this metric in quarterly reports. |
| BHS (bimonthly) | 81 per cent of its SOP indicators (26 out of 32 indicators) | mainly adult mental health services, maternity services, patient experience and access to emergency and elective surgery. |
| DjHS (monthly) | 91 per cent of its SOP indicators (10 out of 11 indicators) | mainly infection control, patient experience and care-associated infections. |

See Appendix F for a full list of SOP KPIs that each audited health service monitors.
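The percentages in the table above follow directly from the indicator counts. As a quick check, the short Python sketch below recomputes them. The function name is hypothetical, and rounding to the nearest whole per cent is an assumption about how the published figures were derived.

```python
def share_not_reported(missing: int, total: int) -> int:
    """Percentage of SOP indicators missing from internal board reports.

    Rounded to the nearest whole per cent (an assumed rounding rule).
    """
    if total <= 0:
        raise ValueError("total must be positive")
    return round(100 * missing / total)


# (indicators not included, total SOP indicators), as stated in the table above
counts = {"MH": (1, 31), "BHS": (26, 32), "DjHS": (10, 11)}

if __name__ == "__main__":
    for service, (missing, total) in counts.items():
        print(f"{service}: {share_not_reported(missing, total)} per cent "
              f"({missing} out of {total} indicators)")
```

Under that assumption, the results match the 3, 81 and 91 per cent reported for MH, BHS and DjHS.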

The Victorian Agency for Health Information (VAHI) is part of DH. It publishes monthly, quarterly and annual 'Monitor' reports on public health services’ performance against the targets agreed in their SOP. Specifically, VAHI provides quarterly Monitor reports for health service boards.

BHS and DjHS's boards rely on quarterly ‘Monitor’ reports from the Victorian Agency for Health Information (VAHI) to review their performance against most of the SOP quality and safety KPIs that are not reported in their bimonthly and monthly KPI board reports respectively. The frequency of this reporting may be appropriate for some indicators, particularly when considering the resources required to generate additional reporting. However, monitoring the large majority of SOP indicators only quarterly limits these health service boards’ ability to identify and address any underperforming areas in a more timely way.

Since feedback from the audit, DjHS has expanded its monthly activity performance report and now includes most of the unreported SOP KPIs.

Additional indicators

Beyond their SOP indicators, all health service boards regularly monitor additional KPIs in their internal quality and safety KPI reports that they identify as a priority (see Appendix G for a full list of these KPIs). Some common additional KPIs include:

- timeliness in providing hospital discharge summaries

- common clinical incidents, such as pressure injuries, falls and medication errors

- unplanned readmissions for specific community groups or medical conditions.

For MH and PH, who already monitor most of their SOP indicators, these additional KPIs mean their boards have a comprehensive view of their quality and safety performance. Both health services have also aligned their internal quality and safety KPI reports to their clinical governance frameworks to allow them to track their progress against specific clinical governance goals.

In contrast, while BHS and DjHS’s boards monitor their additional KPIs, these KPIs do not address the gaps in their SOP KPI monitoring, which is the minimum monitoring requirement set by DH. This means that BHS and DjHS’s boards are not monitoring sufficient KPIs to have a comprehensive view of service quality and safety.

Additionally, BHS and DjHS do not group and report on KPIs against their local clinical governance framework goals, unlike MH and PH. This means that BHS and DjHS’s boards cannot easily assess if they are meeting their clinical governance goals.

Identifying and investigating poor performance

Health services need to promptly identify quality and safety risks so they can swiftly address them and prevent harm. To do this, health service boards need comprehensive reports that present clear analysis of quality and safety performance, risks and actionable insights. In particular, these reports need to include long-term trend analysis and reasons for underperformance.

Only two of the four audited health services are doing this:

| Board-level reports at … | are … | because … | As a result, these health service boards … |
|---|---|---|---|
| MH | comprehensive in highlighting emerging risks and underperformance | | can identify emerging quality and safety risks and hold their executives accountable for implementing improvements. |
| PH | comprehensive in highlighting emerging risks and underperformance | PH has a low threshold for initiating further investigations, which it calls 'in focus' analyses, into underperforming KPIs and includes these as part of its board reports. 'In focus' analyses are comprehensive because they identify longer-term trends, include reasons for underperformance and note actions management is taking to improve performance. | can identify emerging quality and safety risks and hold their executives accountable for implementing improvements. |
| BHS | not comprehensive in highlighting emerging risks and accounting for underperformance | | are not well equipped to identify and effectively respond to significant quality and safety risks. |
| DjHS | not comprehensive in highlighting emerging risks and accounting for underperformance | | are not well equipped to identify and effectively respond to significant quality and safety risks. |

Recommendations about analysing and responding to performance indicators

| We recommend that: | Response | |

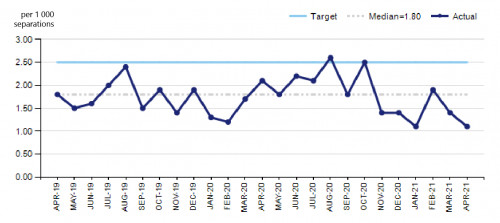

|---|---|---|

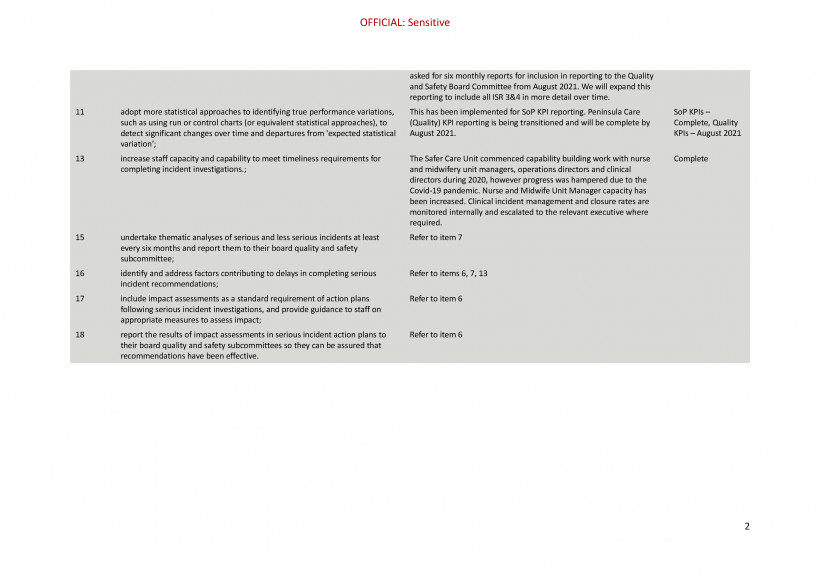

| Peninsula Health, Ballarat Health Services and Djerriwarrh Health Services | 11. adopt more statistical approaches to identifying true performance variations, such as using run or control charts (or equivalent statistical approaches), to detect significant changes over time and departures from expected statistical variation (see Section 4.2) | Accepted by: Peninsula Health, Ballarat Health Services and Djerriwarrh Health Services |

| Ballarat Health Services and Djerriwarrh Health Services | 12. provide more detailed accounts to their boards regarding performance issues, including at a minimum:

|

Accepted by: Ballarat Health Services and Djerriwarrh Health Services |
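To illustrate what a control chart approach involves, the Python sketch below computes limits for an individuals (XmR) chart, one common type of control chart, and flags points outside them. It is a minimal sketch under stated assumptions, not a method the report or any audited health service prescribes: the function names and the monthly values are made up for illustration, and the limits use the standard XmR constant of 2.66 times the average moving range.

```python
from statistics import mean


def xmr_limits(values: list[float]) -> tuple[float, float, float]:
    """Return (centre line, lower limit, upper limit) for an individuals (XmR) chart."""
    if len(values) < 2:
        raise ValueError("need at least two observations")
    centre = mean(values)
    # Moving range: absolute difference between consecutive observations.
    moving_ranges = [abs(b - a) for a, b in zip(values, values[1:])]
    spread = 2.66 * mean(moving_ranges)
    return centre, centre - spread, centre + spread


def points_outside_limits(values: list[float]) -> list[int]:
    """Return the positions (0-based) of observations outside the control limits."""
    _, lower, upper = xmr_limits(values)
    return [i for i, v in enumerate(values) if v < lower or v > upper]


# Hypothetical monthly KPI results, invented purely for illustration.
monthly_rate = [4.0, 5.0, 4.0, 5.0, 4.0, 5.0, 4.0, 12.0]

if __name__ == "__main__":
    centre, lower, upper = xmr_limits(monthly_rate)
    print(f"centre={centre:.2f}, lower={lower:.2f}, upper={upper:.2f}")
    print("positions outside limits:", points_outside_limits(monthly_rate))
```

With these invented values, only the final month falls outside the limits. In practice, limits are usually calculated from a stable baseline period rather than from the same points being tested, which this sketch does not do.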

Investigating and responding to clinical incidents

Incident management and open disclosure policies

All audited health services have incident management policies that provide staff with clear information on their processes for reporting, investigating and responding to incidents.

Safer Care Victoria (SCV) is an administrative office of DH and is responsible for leading quality and safety improvements in the Victorian health system.

However, BHS and DjHS’s policies do not clearly emphasise the support available to staff involved in incidents. DjHS's policy also does not:

- stress that staff must act immediately to contain risks and treat harm

- clearly indicate time frames for completing in-depth case reviews (IDCRs) and Safer Care Victoria's (SCV) recommendations and action plan document.

All audited health services have open disclosure policies and procedures that are broadly consistent with the Australian Commission on Safety and Quality in Health Care's (ACSQHC) Australian Open Disclosure Framework. However, only MH routinely monitors its open disclosure process to ensure each case is promptly and appropriately actioned.

A sentinel event is a wholly preventable adverse event that results in a death or serious harm to a patient.

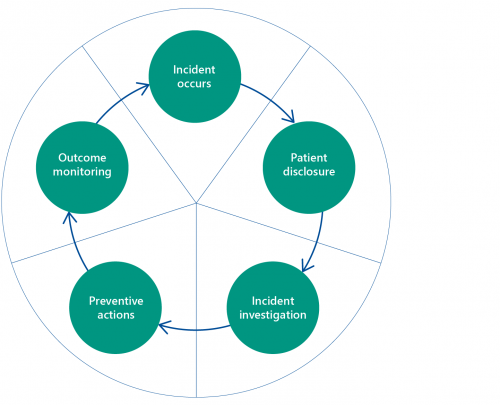

Delays in completing serious incident investigations

All audited health services complete sentinel event investigations within SCV's time frames. However, none of them consistently complete investigations into other serious incidents within the time frames set in their own policies. This means that these health services are not consistently identifying causal and contributing factors early enough, which creates a risk that similar serious incidents will recur. All health services stated that these investigations are delayed because they lack the required staff capacity and capability.

Recommendation about completing serious incident investigations

| We recommend that: | | Response |
|---|---|---|
| Melbourne Health, Peninsula Health, Ballarat Health Services and Djerriwarrh Health Services | 13. increase staff capacity and capability to meet timeliness requirements for completing incident investigations (see Section 4.3). | Accepted by: Melbourne Health, Peninsula Health, Ballarat Health Services and Djerriwarrh Health Services |

No regular thematic analyses of incidents

Health services should undertake regular, service-wide thematic analyses of all serious (ISR 1 and ISR 2) and less serious (ISR 3 and ISR 4) incidents to determine common themes or clusters relating to:

- clinical divisions or units

- common causal or contributing factors.

Not all health services undertake regular analyses of serious incidents. Only MH analyses serious incidents to identify any underlying themes every six months. While BHS analyses serious incidents monthly to identify themes, its analysis does not go into enough detail to identify common contributing factors. For instance, in February 2020, BHS identified that 70 per cent of medication errors involved its ‘administration process’ but did not further examine specific issues in its process for administering medication. BHS's individual monthly analyses also do not identify if common contributing factors recur across multiple months.

PH and DjHS analyse serious incidents for common themes on an ad-hoc basis. For DjHS, this also depends on it having sufficient data available for analysis.

None of the four audited health services undertake regular thematic analyses of less serious incidents. Consequently, they risk not identifying clusters of incidents or common factors underlying individual incidents.
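One simple way to check whether contributing factors recur across months, rather than only within a single month's analysis, is to group incident records by factor and count the distinct months in which each appears. The Python sketch below shows the idea. The record layout, function name and factor labels are all hypothetical and are not drawn from any audited health service's data.

```python
from collections import defaultdict


def recurring_factors(incidents: list[tuple[str, str]], min_months: int = 2) -> dict[str, list[str]]:
    """Return contributing factors recorded in at least `min_months` distinct months.

    Each incident is a (month, contributing_factor) pair, for example ("2020-02", "handover").
    """
    months_by_factor: dict[str, set[str]] = defaultdict(set)
    for month, factor in incidents:
        months_by_factor[factor].add(month)
    return {
        factor: sorted(months)
        for factor, months in months_by_factor.items()
        if len(months) >= min_months
    }


# Hypothetical incident records, invented purely for illustration.
sample_incidents = [
    ("2020-01", "handover"),
    ("2020-02", "handover"),
    ("2020-02", "labelling"),
    ("2020-03", "handover"),
]

if __name__ == "__main__":
    for factor, months in recurring_factors(sample_incidents).items():
        print(f"{factor}: recorded in {len(months)} months ({', '.join(months)})")
```

The same grouping could be applied by clinical division or unit instead of by contributing factor to look for clusters.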

Recommendations about thematic analyses of incidents

| We recommend that: | | Response |
|---|---|---|
| Melbourne Health | 14. undertakes thematic analyses of less serious incidents every six months at a minimum and includes them in its clinical incidents themes and trends report to its board quality and population health subcommittee (see Section 4.3) | Accepted by: Melbourne Health |
| Peninsula Health, Ballarat Health Services and Djerriwarrh Health Services | 15. undertake thematic analyses of serious and less serious incidents at least every six months and report them to their board quality and safety subcommittee (see Section 4.3). | Accepted by: Peninsula Health, Ballarat Health Services and Djerriwarrh Health Services |

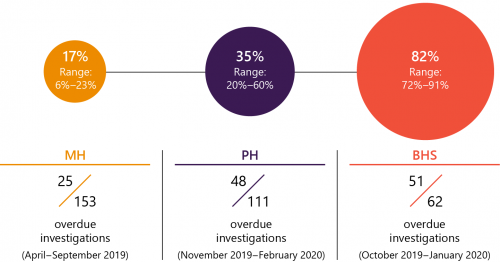

Delays in completing serious incident recommendations

If a health service identifies a risk through a serious incident investigation, it needs to quickly address it to prevent the incident from recurring.

Overdue recommendations

Of the audited health services, only MH had no overdue recommendations from its serious incident investigations. PH, BHS and DjHS are not implementing their recommendations within their own specified time frames.

The reasons for PH and DjHS’s delays in implementing recommendations are unclear. Concerningly, as at April 2020, during our audit, the majority (70 per cent) of BHS’s recommendations were overdue, which put patients at risk of known and avoidable harm. BHS advised us that these delays are due to resourcing and skill deficiencies in its centre for safety and innovation team, which it has been working to address.

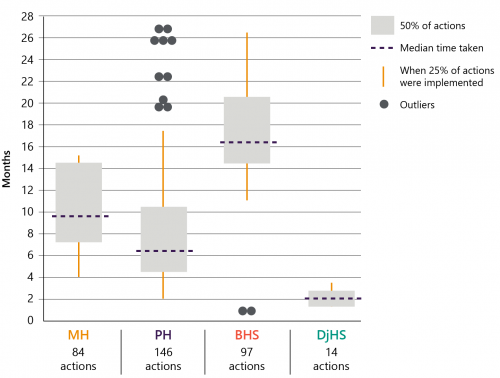

Time taken to implement recommendations

MH and PH were comparable in the typical amount of time they took to implement their recommendations—ten and six months respectively. Broadly, both health services took longer to implement more complex recommendations, which is reasonable.

In contrast, BHS typically took longer to implement its recommendations (16 months) and there is no distinct pattern as to why some recommendations took longer than others. This further indicates that BHS is not acting early enough to prevent future harm to patients.

DjHS took the least amount of time to implement its recommendations—about two months—but many of its actions were to mitigate basic risks, such as keeping walkways clear, rather than more comprehensive actions to address root causes.

Recommendation about implementing serious incident recommendations

| We recommend that: | | Response |
|---|---|---|
| Peninsula Health, Ballarat Health Services and Djerriwarrh Health Services | 16. identify and address factors contributing to delays in completing serious incident recommendations (see Section 4.3). | Accepted by: Peninsula Health, Ballarat Health Services and Djerriwarrh Health Services |

Assessing the impact of implemented recommendations

Following a serious incident investigation, a health service should identify how it will assess if its actions are effectively preventing similar patient harm.

None of the four audited health services consistently identify appropriate measures to assess the effectiveness of their implemented actions. Of the 16 serious incident action plans we assessed across these health services, only two identify measures to assess if a causal or contributing factor recurs.

Two factors contribute to the audited health services’ lack of effective monitoring:

| For … | health services do not routinely identify measures to assess the impact of their actions because … |
|---|---|
| sentinel events | while SCV requires health services to document a relevant 'outcome measure' to assess the effectiveness of their actions, it does not define or provide any guidance on what an outcome measure should be. As a result, health services tend to only record that they have completed an action and not how they will measure its impact. |
| other serious incidents | with the exception of BHS, their IDCR templates do not require staff to include measures to assess the impact of any actions. While BHS's template does require staff to include these measures, it does not provide guidance to staff on how to identify appropriate measures. |

Recommendations about assessing the impact of implemented recommendations

| We recommend that: | | Response |
|---|---|---|
| Melbourne Health, Peninsula Health, Ballarat Health Services and Djerriwarrh Health Services | 17. include impact assessments as a standard requirement of action plans following serious incident investigations and provide guidance to staff on appropriate measures to assess impact (see Section 4.3) | Accepted by: Peninsula Health, Ballarat Health Services and Djerriwarrh Health Services. Partially accepted or accepted in principle by: Melbourne Health |
| Melbourne Health, Peninsula Health, Ballarat Health Services and Djerriwarrh Health Services | 18. report the results of impact assessments in serious incident action plans to their board quality and safety subcommittees so they can be assured that recommendations have been effective (see Section 4.3). | Accepted by: Peninsula Health, Ballarat Health Services and Djerriwarrh Health Services. Partially accepted or accepted in principle by: Melbourne Health |

1. Audit context

Health services provide care in complex and high-pressure environments where avoidable harm to patients can occur. Effective clinical governance cultures, systems and processes minimise this risk and reduce the potential for harm.

A health service's clinical governance framework describes the activities it will undertake to minimise harm and maximise the quality of patient care. Health services must meet national and state standards for clinical governance.

This chapter provides essential background information about:

1.1 What is clinical governance?

According to ACSQHC, clinical governance refers to systems and processes that maintain and improve the reliability, safety and quality of healthcare provided to patients. Strong clinical governance results in healthcare that is safe, effective, patient centred and continuously improving.

During 2013 and 2014, there was a cluster of perinatal deaths at DjHS. Subsequent reviews found that there was inadequate clinical governance at the health service and it was not monitoring and responding to adverse clinical outcomes in a timely way.

Following these incidents, the Minister for Health asked the then Department of Health and Human Services (DHHS) to commission the Targeting Zero review.

Victorian health services must meet national and state standards for clinical governance. These include the VCGF, which SCV developed in response to Targeting Zero's recommendations. The Targeting Zero review primarily focused on how the then Department of Health and Human Services (DHHS) was managing, overseeing and monitoring quality and safety across the health system. Where relevant, it also briefly examined health services' clinical governance management, oversight and monitoring.

Targeting Zero's recommendations included establishing new agencies (see Section 1.3) and systems to support more effective clinical governance, including frameworks and projects to improve the practical capability of Victorian health services.

This report

This is the first of two performance audit reports that follow up on the sector's progress since the Targeting Zero review. The review recommended that, in 2020, we assess the sector's progress in implementing its recommendations. This report examines health services' clinical governance systems and processes, with a particular focus on actions taken at the board and executive levels. The second report examines DH's oversight of clinical governance across the health system.

1.2 Clinical governance standards and expectations

National Safety and Quality Health Service Standards

All Australian health services must be accredited against the National Safety and Quality Health Service Standards (NSQHS Standards) to operate. We outline them in Figure 1A.

FIGURE 1A: NSQHS Standards

| Standard | What it looks like |
|---|---|
| 1. Clinical governance | Continuous improvement of the safety and quality of health services and ensuring that health services are patient centred, safe and effective |
| 2. Partnering with consumers | Partnering with consumers to plan, design, deliver, measure and evaluate care |
| 3. Preventing and controlling healthcare-associated infections | Systems to prevent, manage or control healthcare-associated infections and antimicrobial resistance to reduce harm and achieve good health outcomes for patients |
| 4. Medication safety | Systems to reduce the occurrence of medication incidents and improve the safety and quality of medicine use |
| 5. Comprehensive care | Systems and processes to support clinicians to deliver comprehensive care and establish and maintain systems to prevent and manage specific risks of harm to patients during the delivery of healthcare |
| 6. Communicating for safety | Systems and processes to support effective communication with patients, carers and families; between multidisciplinary teams and clinicians; and across health service organisations |
| 7. Blood management | Systems to ensure the safe, appropriate, efficient and effective care of patients’ own blood, as well as other blood and blood products |
| 8. Recognising and responding to acute deteriorations | Systems and processes to respond effectively to patients when their physical, mental or cognitive condition deteriorates |

Source: VAGO, adapted from ACSQHC's NSQHS Standards (second edition), 2017.

Standard 1 specifically relates to clinical governance and requires health services to 'implement a clinical governance framework that ensures that patients and consumers receive safe and high-quality health care'. Health services must use their local clinical governance framework when implementing policies and procedures, managing risks and identifying training requirements for other standards.

Victorian Clinical Governance Framework

Under DH's Policy and Funding Guidelines, all Victorian health services must comply with the VCGF, which requires them to:

- establish a clinical governance framework that complies with the VCGF

- implement their framework by:

  - socialising it with their staff

  - using it

  - improving it.

The VCGF sets out expectations regarding best-practice clinical governance for Victorian public health services. It describes the principles of effective clinical governance, which Figure 1B outlines, and identifies five domains required for implementing these principles, which Figure 1C shows.

FIGURE 1B: The VCGF’s clinical governance principles

| Clinical governance principle | What it looks like |
|---|---|
| Excellent consumer experience | Commitment to providing a positive consumer experience every time |
| Clear accountability and ownership | |
| Partnering with consumers | Consumer engagement and input is actively sought and facilitated |
| Effective planning and resource allocation | Staff have access to regular training and educational resources to maintain and enhance their required skill set |
| Strong clinical engagement and leadership | |
| Empowered staff and consumers | |
| Proactively collecting and sharing critical information | |
| Openness, transparency and accuracy | Health service reporting, reviews and decision-making are underpinned by transparency and accuracy |
| Continuous improvement of care | Rigorous measurement of performance and progress is benchmarked and used to manage risk and drive improvement in the quality of care |

Source: the VCGF.

1.3 Structure of Victoria's health system

Department of Health

From 1 February 2021, DHHS split into DH and the Department of Families, Fairness and Housing.

DH manages the Victorian public health system. It oversees and monitors the state's health services. According to the Victorian health services Performance Monitoring Framework 2019–20 (Performance Monitoring Framework), DH is responsible for:

- partnering with health services to identify and address performance concerns early and effectively

- supporting or intervening to ensure long-term and sustained performance improvement

- making use of available data and intelligence to maximise the depth and breadth of information used to assess health services’ performance

- enhancing health service boards’ skills and capabilities in clinical governance and other required information to ensure high-quality and safe care.

DH sets the rules for all Victorian health services through the Policy and Funding Guidelines. Annual service agreements, known as Statements of Priorities (SOPs), outline the Minister for Health's key performance expectations, targets and funding for public health services. DH monitors health services' performance against these expectations and targets using the Performance Monitoring Framework.

Victorian Agency for Health Information

VAHI is a business unit of DH that analyses and shares information across the health system. It does this by identifying measures of patient care and outcomes and using them for public reporting, oversight and clinical improvement. Its key functions include:

- collecting, analysing and sharing data so the community is better informed about health services and health services receive better information about their performance

- providing health service boards, executives and clinicians with the information they need to best serve their communities and provide better, safer care

- providing patients and carers with meaningful and useful information about care in their local community

- improving researchers’ access to data to create evidence that informs the provision of better, safer care.

VAHI provides regular quality and safety reports to public health services, DH and SCV.

Safer Care Victoria

SCV is an administrative office of DH and is responsible for leading quality and safety improvements in the state's health system. An administrative office is a public service body that is separate from a government department but reports to the department’s secretary.

SCV’s key functions include:

- supporting health services to prioritise and improve the safety and quality of patient care by, for instance, developing and providing best-practice resources

- implementing targeted improvement projects across the health service system

- providing independent advice and support to health services to help them respond to and address serious quality and safety concerns

- reviewing health services’ performance, along with DH, to investigate and improve patient safety and quality of care

- monitoring sentinel events reported by health services, as well as the quality of health services’ investigations and how successful their actions are to prevent similar events recurring

- undertaking reviews of systemic safety issues to identify areas for local and system-level improvement.

SCV partners with VAHI to monitor and review individual health services’ performance data and advises health services and DH about areas for improvement.

Public health services

Under the Health Services Act 1988, the Minister for Health appoints independent boards for health services, except for denominational and privately owned public hospitals. Boards must have effective and accountable risk management systems. This includes systems and processes to monitor and improve the quality, safety and effectiveness of the health services provided.

The Performance Monitoring Framework requires public hospitals and health services to:

- partner with DH and other agencies to improve performance at an individual and system-wide level

- promptly report any emerging risks or potential performance issues, including immediate action taken, to DH

- establish and maintain a culture of safety and performance improvement

- submit data and other information, including information about implementing agreed action plans and status update reports, in an accurate and timely way

- collaborate with other health services and system partners to maintain and improve performance and meet community needs.

The VCGF also details the roles and responsibilities of health service boards, chief executive officers (CEO), executives, clinical leaders, managers and staff.

1.4 Audited health services

This audit examined four public health services, which Figure 1D shows. These public health services differ in size, location and clinical capacity. This means they have different local contexts to consider as they adapt their local clinical governance frameworks to the VCGF.

FIGURE 1D: Locations and campuses of audited health services

Source: VAGO.

Djerriwarrh Health Services' operating environment

DjHS has been operating under an administrator since October 2015. Under the Health Services Act 1988, the administrator has and may exercise all of the board’s powers and is subject to all of the board’s duties. Given this, we refer to the administrator as 'the board' in this report.

Since its serious patient safety issues in 2013 and 2014, DjHS has been operating in a significantly challenging environment due to:

- multiple senior executive changes, including its CEO, between 2015 and mid-2018

- lengthy proceedings with the Australian Health Practitioner Regulation Agency and the Victorian Civil and Administrative Tribunal

- the December 2019 announcement that it will potentially voluntarily amalgamate with Western Health, and the subsequent consultations as part of the potential amalgamation process.

2. Establishing and embedding clinical governance frameworks

Conclusion

Not all audited health services have embedded their clinical governance frameworks in their organisations. While their frameworks are generally consistent with the VCGF, only MH and PH use their frameworks to identify specific local quality and safety priorities, raise staff awareness and drive changes in organisational practices.

Our comparison of health services' progress in embedding clinical governance shows the difference between having a document and applying it in practice. Over four years since Targeting Zero, all health services should be 'living' their local clinical governance frameworks, but this is not yet the case.

This chapter discusses:

2.1 Establishing local clinical governance frameworks

Two of the four audited health services—BHS and DjHS—are not yet meeting DH’s requirement to have an established and embedded local clinical governance framework that complies with the VCGF.

A health service’s local clinical governance framework complies with the VCGF when:

- its definition of high-quality care is consistent with the VCGF

- it includes activity domains that are consistent with the VCGF and has corresponding priorities and activities in each domain.

The VCGF defines high-quality care as:

- safe—'avoidable harm during delivery of care is eliminated'

- effective—'appropriate and integrated care is delivered in the right way at the right time, with the right outcomes for each consumer'

- person centred—'people’s values, beliefs and their specific contexts and situations guide the delivery of care and organisational planning. The health service is focused on building meaningful partnerships with consumers to enable and facilitate active and effective participation'.

As Figure 1C shows, the VCGF identifies five underlying activity domains that health services require to achieve high-quality care. It also recommends a range of activities for each domain. Using the VCGF as a guide, health services should:

- reflect on and identify their own priority activities for each VCGF domain

- articulate these activities as priorities in their local clinical governance framework.

Figure 2A shows the progress of each audited health service in meeting these requirements.

FIGURE 2A: Audited health services' progress in implementing clinical governance framework requirements as at June 2020

| | MH | PH | DjHS | BHS |
|---|---|---|---|---|
| Title of local clinical governance framework | Clinical Governance Framework | Peninsula Care Framework (Peninsula Care) | Quality and Safety Framework | Governance Framework |
| Definitions align with the VCGF | ✓ | ✓ | ✓ | ✓ |
| Domains align with the VCGF | ✓ | ✓ | ✓ | ✓ |
| Local priority activities to support achievement in domain areas are included | ✓ | ✓ | ✕ | ✕ |
| Progress status | Actively using the framework and undertaking ongoing monitoring to improve it | Completed and socialised the framework and is identifying how to monitor its implementation | Completed the framework but has not socialised and embedded it | Recently completed the framework and is starting to socialise and embed it |

Source: VAGO.

Three of the audited health services chose to expand their definition of high-quality care, with MH including 'timely care' and PH and BHS including 'connected care' in their frameworks. These additions prompt these health services to prioritise and monitor these aspects of safe and quality care.

For PH and BHS, connected care is primarily focused on providing an integrated care pathway. This means care that reflects the patient's various needs, is matched to the different clinicians and services required and works together in a coordinated way.

Only MH and PH have identified priority activities within each of their activity domains to target their improvement efforts. BHS only identified its priorities in January 2021, and these priorities were still to be approved by BHS's quality care committee (board subcommittee) in early 2021.

DjHS has not identified priority activities in its Quality and Safety Framework. Instead, DjHS relies on a range of other policies and plans to operationalise clinical governance, such as its strategic and operational plans and Safe Practice Framework. Not having this information in a consolidated document makes it difficult for DjHS to identify gaps and for staff to easily understand the range of activities needed to provide safe, effective and person-centred care.

Catalysts and challenges

We found that the audited health services' progress in implementing the VCGF has been affected by:

- leadership, including leadership changes and whether leaders champion patient safety and align their local clinical governance framework with the VCGF

- organisational culture, particularly the extent to which health services' executives and managers address bullying and harassment, occupational violence and aggression, and prioritise staff’s physical and psychological safety.

A major challenge for health services in implementing their local clinical governance frameworks has been staff lacking awareness and knowledge about:

- the importance of clinical governance

- how they and their work unit should contribute to delivering high-quality care to patients.

As Figure 2B shows, each of the four health services has experienced different catalysts and challenges associated with implementing the VCGF and establishing and implementing its own clinical governance framework.

FIGURE 2B: Catalysts and challenges associated with implementing the VCGF and local clinical governance frameworks

| Health service | Catalysts | Challenges |
|---|---|---|
| MH | | Keeping staff engaged and reminding them about the importance and impact of effective clinical governance in the workplace. MH addresses this through ongoing, multiple channels for raising awareness and feedback |
| PH | A new CEO with a focus on clinical governance | |
| BHS | New senior executives and board members recognised the need to improve organisational culture as a key priority | Lack of awareness across the organisation about the VCGF and its importance as a tool for prioritising activities to strengthen clinical governance |
| DjHS | | |

2.2 Embedding clinical governance frameworks

Clear staff roles and responsibilities

To meaningfully implement a clinical governance framework, a health service needs to express how the framework translates to staff roles and responsibilities. This enables staff to have a clear understanding about how they contribute to providing high-quality care.

All four audited health services' local clinical governance frameworks identify staff roles and responsibilities for achieving high-quality care that broadly align with the VCGF. Health services have also formalised their expectations of staff by adding quality and safety roles and responsibilities to staff position descriptions, which Figure 2C shows. When health services identify defined roles and responsibilities, staff have a clear and consistent understanding of their part in providing high-quality care. They can also be held accountable if their behaviour does not align with stated expectations.

FIGURE 2C: Examples of how audited health services have included quality and safety expectations in staff position descriptions

| Health service | Position description updates |
|---|---|
| MH | Now includes a standard clinical governance framework section. This section states that employees are responsible for delivering safe, timely, effective and person-centred (STEP) care and outlines a few ways that employees can achieve this, including: |
| PH | Now includes a standard quality and safety section. This section emphasises that staff are responsible for ensuring: |
| BHS | Now includes an 'occupational health, safety and quality responsibilities' section for each position description. The content in this section varies for executive staff, managers and other employees. Examples of executive staff and the CEO’s responsibilities are: Examples of managers’ responsibilities are: |
| DjHS | Now includes a standard 'quality improvement' section that outlines a range of responsibilities, including: |

Note: STEP is an acronym that MH uses to represent its high-quality care goals of safe, timely, effective and person-centred care in its clinical governance framework. MH staff commonly refer to STEP when talking about the health service's clinical governance framework.

Source: VAGO.

Building expectations into day-to-day activities

As figures 2D, 2E and 2F show, MH and PH have implemented extensive initiatives to translate their local clinical governance frameworks into practice. These initiatives collectively reinforce a consistent message to staff across the health services and maintain their focus on achieving high-quality care.

In contrast, BHS and DjHS are yet to demonstrate the impact of their clinical governance frameworks on achieving high-quality care. This is because they have implemented few initiatives to embed their frameworks. We note BHS and DjHS's initiatives in Figure 3D in Chapter 3.

FIGURE 2D: Common MH and PH initiatives to embed their clinical governance frameworks

| Initiative | MH | PH |
|---|---|---|
| Ward or unit plans/noticeboards | Improvement boards | Peninsula Care placemats |
| Quality and safety huddles (based on clinical governance framework goals) | Ward staff improvement huddles | - |
| Reorganisation of board quality and safety subcommittee meeting agendas | - | In accordance with Peninsula Care goals of safe, personal, effective and connected care |
| Restructuring KPI reporting to prioritise focus areas within clinical governance framework | In accordance with MH's STEP goals | In accordance with Peninsula Care goals of safe, personal, effective and connected care |
| Staff orientation | Half-day presentation to introduce the five domains that support STEP | Introduces Peninsula Care and emphasises staff responsibility to speak up for safety |

Source: VAGO.

FIGURE 2E: Case study: MH's approach to embedding its local clinical governance framework

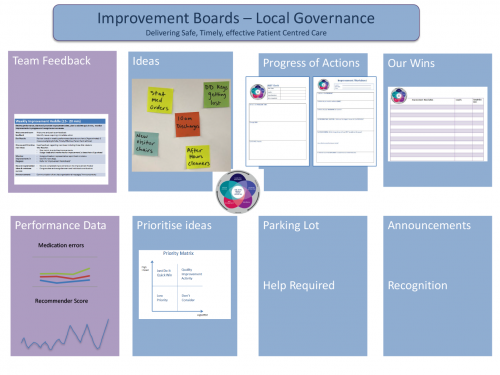

Improvement boards and huddles

Each ward at MH has an ‘improvement board’ on display in its staff meeting room. These noticeboards present a range of information, such as:

- selected STEP performance indicators and corresponding data

- staff suggestions for improvements and their implementation status

- staff recognition.

The wards also hold weekly ‘improvement huddles’ where staff across all disciplines discuss the information presented on the boards.

MH acknowledges that there should be a level of consistent information recorded on improvement boards across wards and in improvement huddles. MH is still in the process of fully embedding this program and working to achieve consistency across its wards.

MH evaluated its improvement huddles pilot and found they increase team engagement because they involve staff across different clinical disciplines coming together. MH found that staff value the opportunity to communicate and exchange views with their colleagues.

Staff also noted that this approach has improved their and their patients’ experience.

The evaluation also found potential barriers to the huddles’ success, such as:

- variable attendance at huddles by the nurse unit manager or assistant nurse unit manager

- heavy reliance on the nurse unit manager or assistant nurse unit manager's capability and capacity to lead the huddles

- duplication with other huddles.

MH is aware that it needs to provide ongoing coaching to staff to embed and sustain this initiative.

The picture below shows a template of what an improvement board looks like.

Note: Parking lot is an area for staff to ‘park’ ideas to consider later.

Source: VAGO, based on information from MH. Image supplied by MH.

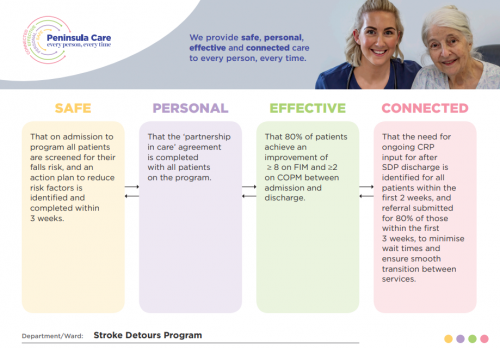

FIGURE 2F: Case study: PH’s approach to embedding its local clinical governance framework

Local Peninsula Care plans and placemats

PH has established a planning and goal-setting process across its local clinical units. Each unit develops a:

- local Peninsula Care plan

- Peninsula Care placemat.

Local units’ Peninsula Care plans identify the specific actions for each unit and categorise them by both the NSQHS Standards and Peninsula Care’s goals. These plans:

- state who is responsible for completing a specific action(s)

- state the action’s expected date of completion

- detail progress against specific actions

- evaluate and include evidence for completed actions.

To share goals and remind staff and visitors how they can contribute to Peninsula Care, local units summarise their Peninsula Care plans and major activities on placemats. Units display placemats publicly.

Peninsula Care plans are a useful tool for local units to identify improvement areas. However, the plans are currently administratively burdensome. PH is examining ways to automate reporting on local plans to reduce the burden on staff.

The following image is an example of a Peninsula Care placemat.

Note: CRP refers to community rehabilitation program. COPM refers to the Canadian occupational performance measure. FIM refers to functional independence measure. SDP refers to the stroke detours program.

Source: VAGO, based on information from PH. Image supplied by PH.

3. Establishing and supporting a positive patient safety culture

Conclusion

MH and PH have made greater improvements to their patient safety cultures since Targeting Zero than BHS and DjHS. They have done this by embedding their clinical governance frameworks in their organisations and supporting staff to actively uphold patient safety.

In contrast, BHS and DjHS have not implemented an appropriate mix of quality and safety initiatives. In particular, they lack actions to build their staff’s confidence to speak up about safety concerns. By not prioritising and engaging in this work, these health services are not doing enough to ensure patient safety.

This chapter discusses:

3.1 What is patient safety culture?

If a health service has a positive patient safety culture:

- staff feel safe to speak up when they have concerns about patient safety

- the health service is committed to learning from errors

- the health service responds to warning signs early and avoids catastrophic incidents.

DH and health services annually assess patient safety culture through the PMS. The survey did not occur in 2020 due to the coronavirus (COVID-19) pandemic. It provides insights into how health service staff perceive their wellbeing in the workplace and the patient safety climate at their health service. Factors that threaten staff wellbeing, including a lack of psychological safety, contribute to a poor patient safety culture.

Three interrelated dimensions contribute to an ongoing positive patient safety culture:

| The dimension of | means staff are … | and it is crucial for establishing and supporting a positive patient safety culture because it … |
|---|---|---|
| staff psychological safety | | |
| staff confidence | | |
| staff engagement | | |

To promote a positive patient safety culture, health services need to implement initiatives that contribute to these three dimensions. Health services should also evaluate their initiatives to assess if they are achieving the intended outcomes.

3.2 Patient safety culture at audited health services

All Victorian health services should have strengthened their patient safety culture following Targeting Zero as part of the sector's renewed focus on improving care.

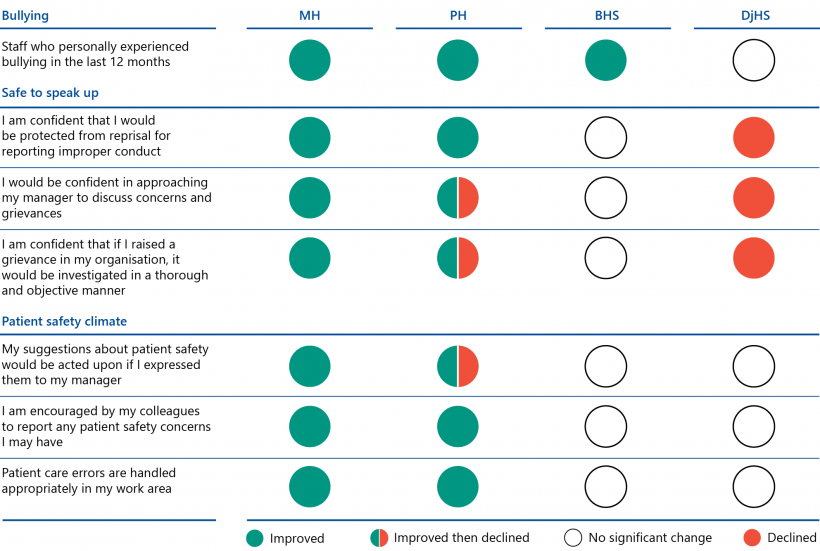

We analysed the audited health services' results for selected PMS questions between 2016 and 2019 on:

- staff experience of bullying

- staff confidence in speaking up

- patient safety climate.

We looked for changes in the results between 2016 and 2019 and analysed the data to assess if these changes were statistically significant at a 95 per cent confidence interval. A 95 per cent confidence interval is the range of values within which you can be 95 per cent confident that the true value lies. We also looked at the raw results to identify areas where further improvements are necessary.
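For readers who want to see what a test of this kind involves, the following is a minimal sketch only. It computes a 95 per cent confidence interval for the change in a survey proportion between two years using a normal approximation. The respondent counts (`N_2016`, `N_2019`) are hypothetical, because this report does not state the PMS sample sizes, and the function names are illustrative. Only the 26 and 15 per cent figures come from the PMS results for MH in Figure 3B. This is not necessarily the method we used in our analysis.

```python
import math


def diff_ci_95(p1, n1, p2, n2):
    """Return (difference, lower, upper) for p2 - p1.

    Uses the normal approximation for the difference between two
    independent proportions.
    """
    diff = p2 - p1
    se = math.sqrt(p1 * (1 - p1) / n1 + p2 * (1 - p2) / n2)
    z = 1.96  # two-sided 95 per cent critical value of the standard normal
    return diff, diff - z * se, diff + z * se


def is_significant(lower, upper):
    """A change is treated as significant if the interval excludes zero."""
    return not (lower <= 0.0 <= upper)


# Hypothetical respondent counts: NOT taken from the PMS.
N_2016 = 1000
N_2019 = 1000

# MH staff who personally experienced bullying: 26 per cent (2016), 15 per cent (2019).
diff, lower, upper = diff_ci_95(0.26, N_2016, 0.15, N_2019)
print(f"change: {diff:+.3f}, 95% CI: [{lower:+.3f}, {upper:+.3f}]")
print("significant:", is_significant(lower, upper))
```

With smaller respondent counts the interval widens, so the same percentage change may no longer be statistically significant. This matters when comparing a large health service with a small one.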

Improvements since Targeting Zero

Of the four audited health services, only MH has consistently improved its staff’s perceptions of patient safety culture across the PMS measures since 2016. While PH’s results have declined for a minority of patient safety culture measures, it has improved its performance overall. This means that since 2016, MH and PH staff:

- better understand the importance of quality improvement activities

- are more likely to participate in these activities to reduce the risk of patient harm

- are more likely to report incidents.

In contrast, BHS and DjHS have not made substantial improvements since 2016, and for DjHS, some results have deteriorated.

Figure 3A summarises these changes between 2016 and 2019.

FIGURE 3A: Changes in staff’s perceptions of workplace wellbeing in the four audited health services from 2016 to 2019

Source: VAGO, based on PMS data between 2016 and 2019.

Areas for further improvement

The PMS data in Figure 3B shows that the percentage of staff who have experienced bullying at MH, PH and BHS has declined since 2016. All four health services also have fair to strong results regarding:

- how confident staff feel about reporting concerns to their manager

- staff feeling encouraged by colleagues to report concerns

- how management responds to and handles safety issues.

However, DjHS has seen an increase in staff reporting that they have experienced bullying. All four health services, but especially DjHS, also have significant room to improve:

- staff feeling safe from reprisal if they report improper conduct

- staff confidence in the integrity of investigations into safety issues.

This indicates that the audited health services can do more to transparently demonstrate the quality of their investigation processes to staff and address their concerns about reprisal, which the low results strongly suggest is occurring. Unless directly addressed, these factors will continue to act as barriers to these health services developing and maintaining a strong patient safety culture. We discuss the persistent patient safety culture issues at DjHS in more detail in Figure 3C.

FIGURE 3B: The four audited health services’ PMS performance results for 2016 and 2019

| PMS measure | MH 2016 (per cent) | MH 2019 (per cent) | PH 2016 (per cent) | PH 2019 (per cent) | BHS 2016 (per cent) | BHS 2019 (per cent) | DjHS 2016 (per cent) | DjHS 2019 (per cent) |
|---|---|---|---|---|---|---|---|---|
| Bullying | | | | | | | | |
| Staff who personally experienced bullying in the last 12 months | 26 | 15 | 28 | 18 | 29 | 17 | 20 | 22 |
| Safe to speak up | | | | | | | | |
| I am confident that I would be protected from reprisal for reporting improper conduct | 50 | 59 | 43 | 54 | 47 | 53 | 54 | 35 |
| I would be confident in approaching my manager to discuss concerns and grievances | 74 | 77 | 68 | 73 | 76 | 75 | 78 | 69 |
| I am confident that if I raised a grievance in my organisation, it would be investigated in a thorough and objective manner | 53 | 57 | 49 | 51 | 50 | 49 | 58 | 31 |
| Patient safety climate | | | | | | | | |
| My suggestions about patient safety would be acted upon if I expressed them to my manager | 70 | 75 | 67 | 72 | 72 | 69 | 73 | 67 |
| I am encouraged by my colleagues to report any patient safety concerns I may have | 76 | 81 | 76 | 80 | 78 | 79 | 82 | 77 |
| Patient care errors are handled appropriately in my work area | 70 | 76 | 68 | 74 | 73 | 71 | 68 | 67 |

Note: Green = improved, orange = improved between 2016 and 2018 then declined in 2019, red = declined and black = no statistically significant change.

Source: VAGO, based on PMS data between 2016 and 2019.

FIGURE 3C: Case study: DjHS’s challenges in creating a positive patient safety culture

Since its serious patient safety issues in 2013 and 2014, DjHS has undergone lengthy proceedings with the Australian Health Practitioner Regulation Agency and the Victorian Civil and Administrative Tribunal, as well as significant governance and personnel changes. Within this challenging context, DjHS has not yet made significant progress in establishing a positive patient safety culture.

The 2019 PMS found that only 31 per cent of DjHS’s staff are confident that it would investigate grievances in a thorough and objective manner. This is significantly lower than the median for its peer health services (health services of similar size and patient types), which is 57 per cent.

Interviews we conducted with DjHS staff corroborated the PMS results. Staff expressed reluctance to report issues relating to safety and wellbeing, including inappropriate behaviour like bullying and harassment, because they had previously received poor responses from executives.

DjHS advised us that its staff have a poor understanding of bullying, harassment and their investigation processes and that management is taking steps to address this through extensive staff education.

DjHS also noted that its PMS results for patient safety culture may be influenced by more general staff sentiment. For example, the results could possibly reflect staff unhappiness with an administrative decision to move some staff to ensure teams are co-located.

However, the PMS questions are very specific, and DjHS's results vary significantly from its peers’ results. DjHS should therefore accept the results and work to understand the root causes of staff concerns and respond to their feedback.

As the table below shows, DjHS’s performance has been declining and moving further away from its peer group’s median results since 2016 for PMS measures on:

- staff confidence that they would be protected from reprisal for reporting improper conduct

- staff confidence that grievances would be investigated in a thorough and objective manner.

| PMS question | Group | 2016 (per cent) | 2017 (per cent) | 2018 (per cent) | 2019 (per cent) |
|---|---|---|---|---|---|
| I am confident that I would be protected from reprisal for reporting improper conduct | Peer median | 56 | 58 | 60 | 58 |
| | DjHS | 54 | 54 | 52 | 35 |
| I am confident that if I raised a grievance in my organisation, it would be investigated in a thorough and objective manner | Peer median | 59 | 64 | 65 | 57 |
| | DjHS | 58 | 57 | 53 | 31 |
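The widening gap can be checked directly from the figures in the table. The following is a minimal sketch, not part of the audit method: it subtracts the peer median from DjHS's result for each year, and the names `PMS_RESULTS` and `gap_to_peer_median` are illustrative assumptions.

```python
# Figures copied from the table above (per cent of staff who agree).
YEARS = (2016, 2017, 2018, 2019)

# Short measure labels are illustrative shorthand for the two PMS questions.
PMS_RESULTS = {
    "protected from reprisal": {
        "peer_median": (56, 58, 60, 58),
        "djhs": (54, 54, 52, 35),
    },
    "grievance investigated": {
        "peer_median": (59, 64, 65, 57),
        "djhs": (58, 57, 53, 31),
    },
}


def gap_to_peer_median(measure):
    """Return {year: DjHS result minus peer median}, in percentage points."""
    results = PMS_RESULTS[measure]
    return {
        year: djhs - peer
        for year, djhs, peer in zip(YEARS, results["djhs"], results["peer_median"])
    }


for measure in PMS_RESULTS:
    print(measure, gap_to_peer_median(measure))
```

On both measures the gap grows every year, from 2 and 1 percentage points below the peer median in 2016 to 23 and 26 percentage points below it in 2019.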

DjHS must do more to provide a workplace culture where staff feel safe to speak up. DjHS has informed us that its People and Culture team are undertaking staff focus groups in May and June 2021 to better understand staff experiences.

3.3 Initiatives to create a positive patient safety culture

To promote a positive patient safety culture, health services need to implement initiatives that increase their staff’s psychological safety, confidence to speak up and engagement.

As Figure 3D shows, MH and PH have implemented an extensive mix of initiatives that address these three dimensions. This means that staff at these health services are more likely to be vigilant in detecting and reporting patient safety issues.

However, MH is the only audited health service that has evaluated its initiatives to understand barriers and enablers to fostering a positive patient safety culture. Figure 3E explains how MH identified ways to improve one of its initiatives. PH advised us that it will be evaluating its initiatives to assess their impact on raising staff awareness of bullying and harassment.

BHS and DjHS have not developed or implemented sufficient initiatives across all three dimensions. This is because they have not developed and/or implemented a comprehensive local clinical governance framework. Without a comprehensive mix of initiatives, BHS and DjHS are not doing enough to promote a positive patient safety culture.

Figure 3D provides an overview of the audited health services’ current initiatives categorised by the three dimensions. Appendix D contains further details about these initiatives.

FIGURE 3D: Audited health services’ initiatives for each patient safety culture dimension

| Health service | Psychological safety initiatives | Confidence to speak up initiatives | Engagement in safety and improvement activities initiatives |
|---|---|---|---|
| MH | | | |
| PH | | | |
| BHS | | Confidential feedback email address | |
| DjHS | | | |

Note: Comparisons should not be made between health services having or missing specific initiatives because each health service's circumstances are different. Instead, we assessed if health services have appropriate initiatives against the patient safety culture dimensions.

Source: VAGO.

The initiatives BHS has implemented to date have focused on increasing its staff’s psychological safety. BHS has only recently started to actively engage staff in quality and safety activities. Figure 3D shows that BHS has implemented a pathway for staff to raise concerns confidentially. However, it has not implemented initiatives to equip staff with the skills and confidence to speak up about safety concerns with their peers and managers. BHS advised us that its workplace safety committee will consider possible actions to address this.

DjHS's initiatives focus on increasing staff awareness of patient safety in wards. Like BHS, DjHS has not implemented initiatives to increase its staff’s skills and confidence in speaking up. This is concerning because DjHS's PMS results show that the percentage of staff who feel safe to speak up has declined, yet DjHS has no initiatives in place to address this.

DjHS advised us that while it had developed a ‘safe to speak up’ program, it did not fully implement it due to competing demands associated with:

- a restructure of its people and culture team

- the potential voluntary amalgamation with a larger health service

- the COVID-19 pandemic.

DjHS noted that instead, its health and safety representatives and managers promote the message that it is safe to speak up through various formal and informal meetings with staff. Nonetheless, DjHS recognises that it needs to take a different approach to improve its PMS results.

DjHS also advised us that it is revising its safe to speak up program, which will include staff focus groups to identify the root causes of its cultural issues.

Figure 3E highlights MH's use and review of its weCare system as an example of how a health service can assess the effectiveness of its cultural initiatives.

FIGURE 3E: Case study: how MH evaluated one of its safety culture initiatives

In May 2018, MH reviewed its weCare system to identify opportunities for improvement.

weCare is a system that enables staff to:

- nominate colleagues for specific staff awards

- raise issues about a colleague's behaviour when they do not feel able to or safe to speak up.

In May 2018, staff provided a range of feedback about weCare through MH’s ‘speaking up for safety survey’. The survey found that:

- staff were concerned about the anonymity and confidentiality of the system

- staff used weCare as a first resort instead of attempting to address issues through their line manager

- staff felt distressed after being notified of incidents via weCare if they did not have prior knowledge of the incident and were unable to apologise or resolve the issue

- leadership lacked confidence in the system

- there were deficiencies in weCare’s triage and escalation processes.

After receiving the feedback, MH undertook a number of steps to improve staff confidence in weCare and encourage appropriate use of the system. For instance:

- MH developed a weCare dashboard for senior leaders to promote transparency and build confidence in the system

- leaders started discussing weCare with their teams to demonstrate their confidence in the system and raise awareness of improvements. At the same time, they also started emphasising that other methods for raising concerns can be effective and that weCare is a ‘safety net’.

From MH's perspective, weCare has been effective as a pathway for staff to raise concerns. However, MH also recognises that it needs to continue to monitor use of the system to drive improvements and prevent misuse.

Source: VAGO, based on information from MH.

4. Identifying and responding to quality and safety risks

Conclusion

While health services act when they identify underperformance or emerging risks, they do not consistently identify and respond to quality and safety risks in a timely way. Significant delays in completing serious incident investigations and resulting actions to address underlying issues mean that patients remain at risk of known avoidable harm for too long.

Health service boards, especially at the audited regional and rural health services, are not consistently monitoring enough information across incidents and KPIs to have a comprehensive view of quality and safety risks.

This chapter discusses:

4.1 Clinical incidents

A clinical incident is an event or circumstance that results in unintended or unnecessary harm to a patient.

DH requires health services to classify all clinical incidents according to the degree of harm that occurred to the patient using the ISR scale, which Figure 4A shows. Sentinel events are a subset of ISR 1 incidents because they are the most serious incidents that are wholly preventable and involve death or serious harm.

FIGURE 4A: ISR categories of clinical incidents

| ISR | Degree of impact |
|---|---|
| 1 | Severe or death |
| 2 | Moderate |
| 3 | Mild |
| 4 | No harm or near miss |

Source: SCV.

4.2 Identifying and monitoring quality and safety risks

While there are multiple layers of governance within a health service, the board and its quality and safety subcommittee are accountable for assuring DH, the Minister for Health and the community about their health service’s quality and safety.

Board quality and safety subcommittees:

- include board members

- provide their meeting minutes to the board

- can escalate risks and issues to the board.

As such, throughout this section we refer to both boards and board quality and safety subcommittees as 'boards'.

To have effective oversight, boards should:

- receive regular reports on clinical incidents and quality and safety KPIs

- understand current and emerging risks

- ensure actions occur to address underperformance and clinical risks.

At the four audited health services, the boards' oversight of quality and safety risks is not consistently adequate. There are various gaps at each audited health service. Overall, there are more significant deficiencies in oversight at BHS and DjHS relating to incident investigations, implementing recommendations and holding executives accountable for underperformance against KPIs. None of the four boards receive regular thematic reports on less serious incidents to check for underlying systemic issues.

Monitoring incident investigations and responses

Health service boards need assurance that their executives are quickly identifying and addressing issues that have caused avoidable serious incidents (mainly ISR 1 and ISR 2 incidents) to minimise the chances of them recurring. For less serious incidents (mainly ISR 3 and ISR 4 incidents) that may occur more frequently, they should receive regular thematic reports that identify any common contributing factors and actions taken to address them.
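As an illustration only, the two kinds of board reporting described above could be sketched as follows. This is a simplified sketch, not any audited health service's process: the record fields (`id`, `isr`, `factor`), the function name `split_and_theme` and the sample records are all hypothetical, and treating ISR 1 and ISR 2 as the serious tier is an assumption, because the text says 'mainly'.

```python
from collections import Counter

# Assumption: ISR 1 and ISR 2 form the 'serious' tier; the text says 'mainly'.
SERIOUS_ISR = {1, 2}


def split_and_theme(incidents):
    """Separate serious incidents from a thematic count of the rest.

    Returns (serious_incidents, themes), where themes is a list of
    (contributing_factor, count) pairs, most frequent first.
    """
    serious = [i for i in incidents if i["isr"] in SERIOUS_ISR]
    themes = Counter(
        i["factor"] for i in incidents if i["isr"] not in SERIOUS_ISR
    )
    return serious, themes.most_common()


# Hypothetical records, invented purely for illustration.
sample = [
    {"id": "A", "isr": 1, "factor": "handover"},
    {"id": "B", "isr": 3, "factor": "medication labelling"},
    {"id": "C", "isr": 4, "factor": "handover"},
    {"id": "D", "isr": 4, "factor": "medication labelling"},
    {"id": "E", "isr": 2, "factor": "falls"},
]

serious, themes = split_and_theme(sample)
print([i["id"] for i in serious])
print(themes)
```

In this sketch, serious incidents stay as individual items so that each investigation can be tracked, while less serious incidents are only counted by contributing factor so that recurring themes stand out.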

None of the audited health service boards receive comprehensive information on incidents and responses to them. In particular, none of the health services undertake systematic analyses of less serious incidents to gauge emerging risks. Figure 4B shows these gaps.

FIGURE 4B: What board incident reports contain at the audited health services

| | MH | PH | BHS | DjHS |
|---|---|---|---|---|
| Review all new serious incidents | ✓ | ✓ | ✓ | ✓ |
| Monitor the status of serious incident investigations | ✓ | Inadequately | ✕ | Inconsistently |
| Assure actions are implemented to address risks | Inadequately | Inadequately | Inadequately | Inconsistently |
| Regularly review common underlying themes across all incidents | Serious incidents only | ✕ | ✕ | ✕ |

Source: VAGO.

Serious incident investigations

MH’s board

MH's board regularly oversees the status of serious incidents but inadequately oversees how the health service implements actions to address them.

MH's oversight of actions is limited because it only reports on the overall proportion of completed recommendations. There is no rule or requirement for when MH needs to account for overdue recommendations. While MH had no overdue recommendations to report during the course of this audit, MH should ensure it has a clear process to do so to avoid a potential gap in its board’s oversight.

PH’s board

PH’s board regularly oversees the status of serious incident investigations and actions to address risks. However, its oversight is inadequate in the following ways:

- PH's reports do not include overdue investigations and recommendations for incidents that occurred outside of the reporting period. This means that the board does not have a full account of overdue investigations and recommendations.

- PH does not account for why investigations or recommendations are overdue and what rectifying actions are in place. Without this information, the board cannot be assured that the executive is taking steps to minimise delays in completing overdue investigations and recommendations. PH has advised us that it will address this gap.

BHS’s board

While BHS’s board does not regularly oversee the status of serious incident investigations, it does regularly oversee the status of actions to address risks. However, its oversight is not adequate. BHS's reports on implementing actions identify overdue actions but do not consistently account for why they are overdue and what actions are needed for improvement. Hence, the board does not know if these delays are reasonable.

DjHS’s board

DjHS does not provide its board with clear and consistent information on the status of its investigations and recommendations. DjHS provides an ISR 1 and ISR 2 summary report to its board at each meeting. While the intent of this report is to inform the board of serious incidents that occurred during the reporting months, it only sometimes includes the status of investigations and implementation of actions for some incidents. Hence, the board does not receive consistent and clear information on whether there are overdue investigations and actions.

Monitoring quality and safety KPIs

To have a comprehensive view of quality and safety performance and risks across a health service, in addition to monitoring incidents, the board needs to:

- assess quality and safety performance against appropriate KPIs as part of its regular board KPI suite, including:

- at a minimum, the mandatory quality and safety SOP KPIs